We will show you how to scrape Google Search results safely using anonymous and elite proxies. Our aim is to help you avoid detection and blocks.

We will give you practical steps to bypass rate limits. This way, you can avoid getting a 429 too many requests response or an IP ban. This guide is for U.S.-based developers and data teams doing SERP scraping for SEO, market research, or product development.

In this article, we cover the basics of SERP scraping. We also talk about legal and ethical boundaries. Plus, we discuss how to choose the right proxy, whether residential or datacenter.

We explain how to set up your technical tools. We also share strategies to handle rate limits and CAPTCHA. You’ll learn how to scrape from different locations and scale your operations.

We emphasize the use of anonymous proxies and elite proxies. These tools help distribute requests and stay within rate limits while remaining compliant. We also discuss how to avoid API throttling and 429 too many requests errors, and we share ways to lower the risk of an IP ban during sustained scraping operations.

Key Takeaways

- We use anonymous proxies and elite proxies to distribute requests and reduce detection.

- Proper setup and rate limiting help avoid API throttling and 429 too many requests errors.

- Choosing between residential and datacenter proxies affects reliability and cost.

- Ethical and legal boundaries guide safe scraping practices for U.S. teams.

- Planning for distributed requests and load testing improves long-term scraping success.

Understanding SERP Scraping

We start by explaining the main idea of collecting search engine results automatically. SERP scraping gets rankings, snippets, and more. This helps teams see how visible they are over time.

What is SERP Scraping?

SERP scraping is about getting data from search engine results pages. It helps us understand organic and paid positions, and even rich results. It’s used for SEO tracking, competitor analysis, and more.

Why Is It Important?

Accurate SERP data is key for measuring visibility and checking SEO plans. It shows changes in search results and how algorithms affect traffic.

With this info, we can focus on the right content, keep an eye on competitors, and make technical improvements. Good data leads to better decisions in marketing and engineering.

The Role of Proxies in Scraping

Proxies hide our IP and spread out traffic. This way, no single IP gets too much traffic. It helps avoid getting banned and keeps requests looking natural.

Choosing the right proxy is important. It affects how well we scrape and how likely we are to get caught. Proxies help us get around limits and avoid being blocked when we make many requests at once.

Legal and Ethical Considerations

We must balance technical goals with clear legal and ethical guardrails before we scrape search results. Respecting site rules and user privacy keeps projects sustainable. This reduces exposure to enforcement actions like account suspension or an IP ban.

Compliance with search engine policies

We review Google’s Terms of Service and robots.txt guidance before any crawl. These documents set limits on automated access and outline acceptable behavior. Failure to follow them can trigger legal notices, account suspension, or an IP ban from search endpoints.

We design scrapers to avoid rapid request bursts that mimic abusive traffic. Implementing sensible pacing prevents 429 too many requests responses. This lowers the chance of escalations involving API throttling or service blocks.

Respecting copyright and data privacy

We treat scraped content as potentially copyrighted. Publisher snippets, images, and rich results often belong to third parties. Reusing that material without permission risks infringement claims.

We minimize collection of personally identifiable information and apply anonymization when retention is necessary. Privacy laws such as GDPR and CCPA can impose obligations when SERPs include names, email fragments, or location clues. Storing only what we need and securing data at rest reduces legal exposure.

Ethical scraping versus malicious scraping

We draw a clear line between legitimate research or business intelligence and harmful activity. Ethical scraping uses rate limits, honors robots.txt, and shares intent when required. Malicious scraping involves mass data theft, credential stuffing, or patterns that cause service disruption.

We avoid tactics that hide intent or overwhelm endpoints. Using proxies to distribute load can be a valid technical measure, yet it must be paired with legal compliance and transparent policies. Poorly designed proxy usage may provoke API throttling measures, 429 too many requests errors, or an IP ban.

We document our approach, monitor request patterns, and respond quickly to complaints. That combination keeps our work robust, defensible, and aligned with industry expectations.

Choosing the Right Proxies

Before we start scraping, we need to understand our proxy options. The type of proxy we choose impacts our success, cost, and ability to avoid rate limits. This is especially true for distributed tasks and load testing.

Types of Proxies: Residential vs. Datacenter

Residential proxies use IPs that internet service providers assign to homes. Google tends to trust them more, so they are blocked less often, but they cost more. They’re a good fit for scraping search engine results pages (SERPs) with traffic that looks natural.

Datacenter proxies come from hosting providers and virtual machines. They’re faster and cheaper, perfect for heavy scraping. However, Google flags them more, increasing detection risk.

Mobile proxies mimic carrier networks, offering the highest anonymity. They’re ideal for targeting mobile-specific results or needing top anonymity.

Factors to Consider When Selecting Proxies

Success rate against Google is our first concern. We look at real-world block and challenge rates to meet our goals.

IP pool size and geographic diversity are key for scraping in different locations. A large pool helps avoid reuse and supports targeting various regions.

Concurrent connection limits and session persistence affect how many threads we can run. Stable sessions are crucial for maintaining search context during long crawls.

Authentication methods, latency, bandwidth caps, and cost per IP are important. We also consider provider reputation and support for rotation and session control for load testing and distributed requests.

Recommended Proxy Providers

We test several top providers to see how they perform in real-world scenarios. Bright Data (formerly Luminati), Smartproxy, Oxylabs, Storm Proxies, and NetNut are often mentioned in reviews.

When evaluating providers, we ask for trial credits and test their SERP scraping success. We also check their support for geo-targeting, session rotation, and persistent connections.

For projects where avoiding rate limits is crucial, we choose elite proxies. They offer high anonymity and stable sessions. This helps reduce detection and boosts performance during load testing and scaling scraping operations.

Setting Up Your Scraping Environment

We start by setting up a solid environment for scraping tasks. A clean setup cuts down on errors and helps avoid hitting rate limits. This makes our tests more reliable.

We pick a programming environment like Python or Node.js. For making HTTP requests, we use requests in Python or axios in Node. For simulating browsers, we choose tools like Puppeteer, Playwright, or Selenium.

Tools for managing proxies handle rotation and authentication. We also use systems like ELK or Grafana to track errors and performance. Docker helps us create the same environment on any machine.

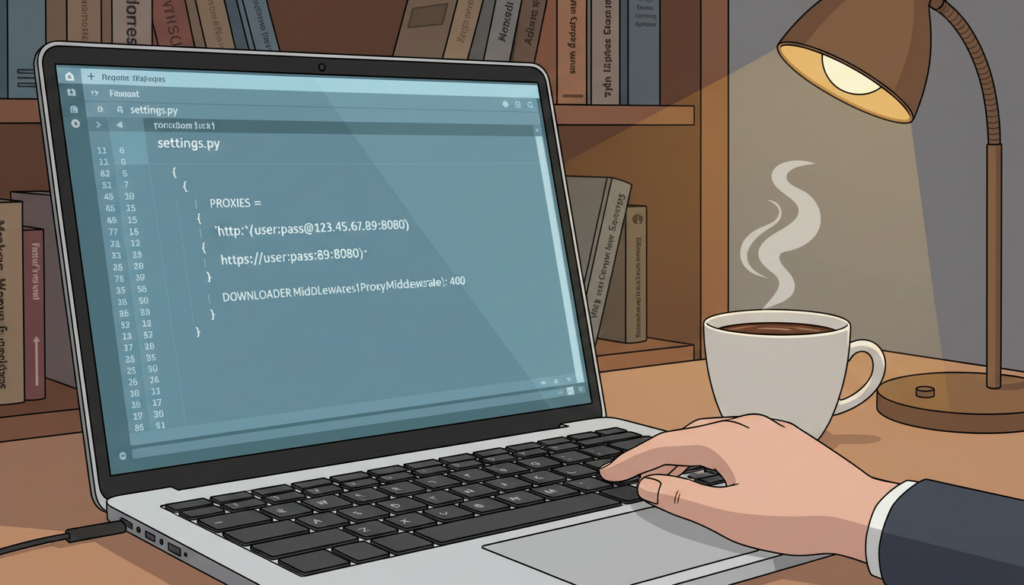

Configuring your proxy settings

We set up proxy settings with secure login options. These include username/password, IP whitelisting, and tokens. We switch proxies for each request or session, depending on the load.

Using connection pooling makes our requests more efficient. For secure connections, we enable TLS/SSL passthrough. We choose between SOCKS5 and HTTP(S) based on speed and protocol needs.

We add timeouts and retry logic to handle failures without hitting limits. We structure retries with exponential backoff to avoid rate limits.
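As a minimal sketch of the username/password setup described above, the following uses only the Python standard library. The gateway host `proxy.example.com`, the port, and the credentials are placeholders for illustration, not values from any real provider.

```python
import urllib.request
from urllib.parse import quote


def build_proxy_url(host: str, port: int, username: str, password: str) -> str:
    """Return an HTTP proxy URL with percent-encoded credentials."""
    user = quote(username, safe="")
    pwd = quote(password, safe="")
    return f"http://{user}:{pwd}@{host}:{port}"


def build_opener(proxy_url: str) -> urllib.request.OpenerDirector:
    """Create an opener that routes HTTP and HTTPS traffic through one proxy."""
    handler = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    return urllib.request.build_opener(handler)


# Hypothetical gateway and credentials, for illustration only.
PROXY_URL = build_proxy_url("proxy.example.com", 8000, "user name", "p@ss:word")
opener = build_opener(PROXY_URL)

# Always pass an explicit timeout so a dead proxy cannot hang a worker:
# response = opener.open("https://example.com/", timeout=10)
```

Percent-encoding the credentials matters when a password contains characters such as `@` or `:`, which would otherwise corrupt the proxy URL.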

Ensuring browser compatibility

We prefer headless Chrome or Chromium for realistic interactions. We use tools like Puppeteer or Playwright to drive them. We rotate user-agents and manage browser fingerprints to avoid detection.

We apply proxy settings at browser launch for consistent routing. We test our scrapers under simulated loads to see how they handle rate limits. By spreading requests across proxy pools, we avoid hitting rate limits.

Creating Your Scraping Script

We start by picking the right language and setting up a clear code structure. This approach prevents common mistakes and helps us avoid hitting rate limits. It also reduces the chance of running into API throttling or 429 too many requests errors.

Choosing a Programming Language

Python, Node.js, or Go are top choices for SERP tasks. Python is great for quick development and has a wide range of tools like requests and BeautifulSoup. Node.js is perfect for browser automation with tools like axios and Puppeteer. Go is ideal for large-scale scraping due to its high concurrency and low latency.

Each language has its own strengths. Python is best for quick prototypes and parsing HTML. Node.js offers easy access to headless Chromium and event-driven I/O. Go excels at efficient concurrency for high-volume crawls.

Basic Code Structure for SERP Scraping

We break down our code into different parts. These include request orchestration, proxy rotation, and rate limiting. We also have response parsing, data validation, and error handling for 429 and network issues.

Request orchestration manages how requests are sent and received. Proxy rotation changes the outgoing IP so no single address exceeds its rate limit. Rate limiting middleware controls delays to prevent API throttling and 429 errors.

Response parsing deals with both static and dynamic content. For dynamic pages, we use headless browsers driven by Playwright or Puppeteer. We keep cookies and session tokens to maintain state and avoid unnecessary retries.
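The proxy rotation component can be sketched as a small round-robin rotator. This is one possible shape, not a prescribed design: the class name `ProxyRotator` and the optional sticky-session behavior are assumptions made for illustration.

```python
import itertools
from typing import Dict, Iterable, Optional


class ProxyRotator:
    """Round-robin proxy rotation with optional sticky sessions."""

    def __init__(self, proxies: Iterable[str]) -> None:
        self._proxies = list(proxies)
        if not self._proxies:
            raise ValueError("at least one proxy is required")
        self._cycle = itertools.cycle(self._proxies)
        self._sticky: Dict[str, str] = {}

    def next_proxy(self, session_id: Optional[str] = None) -> str:
        """Return the next proxy, or the pinned proxy for a known session."""
        if session_id is None:
            return next(self._cycle)
        if session_id not in self._sticky:
            self._sticky[session_id] = next(self._cycle)
        return self._sticky[session_id]

    def release(self, session_id: str) -> None:
        """Forget a session so its next request gets a fresh proxy."""
        self._sticky.pop(session_id, None)
```

Calling `next_proxy()` without a session ID rotates on every request, while passing a session ID keeps cookies and IP address paired for the life of that session.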

Common Libraries and Frameworks

We use well-known libraries to make development faster and more reliable. Here’s a quick look at some popular tools for SERP scraping.

| Language / Tool | Use Case | Key Strength |
|---|---|---|
| Python: requests, aiohttp, BeautifulSoup, lxml | Lightweight requests, async scraping, fast HTML parsing | Easy syntax, rich parsing options, strong community |
| Python: Selenium, Playwright | Rendering JS, complex interactions, session handling | Robust browser automation, good for dynamic SERPs |
| Node.js: axios, node-fetch, Cheerio | HTTP clients and fast HTML parsing | Event-driven I/O, seamless JS environment |
| Node.js: Puppeteer, Playwright | Headless browser automation and page rendering | Native control of Chromium, reliable for complex pages |
| Go: net/http, colly | High-performance crawling and concurrent requests | Fast execution, low memory footprint, strong concurrency |
| Auxiliary: Scrapy, ProxyBroker | Frameworks for full pipelines and proxy discovery | Built-in middleware, easy proxy integration |

We add proxy rotation and retry logic to our middleware. This includes exponential backoff for 429 errors and randomized delays to stay under rate limits. When API throttling happens, we reduce concurrency and increase backoff to recover smoothly.
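The backoff behavior described above might look like the following sketch. It uses the "full jitter" variant of exponential backoff and honors a server-supplied `Retry-After` value when one is present. The function name and default values are illustrative assumptions.

```python
import random
from typing import Optional


def backoff_delay(
    attempt: int,
    base: float = 1.0,
    cap: float = 60.0,
    retry_after: Optional[float] = None,
    rng: Optional[random.Random] = None,
) -> float:
    """Seconds to wait before retry number `attempt` (0-based).

    If the server sent a Retry-After value, wait at least that long.
    Otherwise pick a random delay between 0 and min(cap, base * 2**attempt).
    """
    if retry_after is not None:
        return max(retry_after, 0.0)
    rng = rng or random
    ceiling = min(cap, base * (2 ** attempt))
    return rng.uniform(0.0, ceiling)
```

Randomizing the whole delay, rather than adding a small random offset, keeps many workers from retrying in lockstep after a shared 429 response.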

We store session cookies and tokens securely and reuse them to lower authentication overhead. For dynamic content, we prefer Playwright or Puppeteer with pooled browser contexts. This way, we can render pages efficiently without starting a full browser process for each request.

Implementing Rate Limiting

We need to control how many requests we send to protect servers and keep our scraping sustainable. Rate limiting stops overload and keeps us within expected patterns. APIs often throttle traffic when it looks off.

Why this control matters

Too many requests can slow servers, cause errors, or even ban IPs. Setting limits helps avoid 429 errors and long-term blocks. It also saves bandwidth and cuts costs from throttling.

Practical techniques to pace traffic

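We use exponential backoff for retries after failures. Adding jittered delays makes patterns harder to spot. Token bucket and leaky bucket algorithms cap sustained throughput: a token bucket permits short bursts up to its capacity, while a leaky bucket smooths traffic to a steady rate.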

Setting per-IP and global caps helps avoid hitting limits. Session-based pacing and staggering workers smooth out peaks. Distributing requests across many proxies mirrors organic traffic and limits load.
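A token bucket, one of the pacing techniques named above, can be sketched in a few lines. The class below is a single-threaded illustration under assumed names. The injectable `clock` parameter exists only to make the behavior easy to test.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow bursts up to `capacity`, refilling at `rate` tokens per second."""

    def __init__(
        self,
        rate: float,
        capacity: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.rate = rate
        self.capacity = capacity
        self._clock = clock
        self._tokens = capacity
        self._last = clock()

    def _refill(self) -> None:
        now = self._clock()
        elapsed = now - self._last
        self._tokens = min(self.capacity, self._tokens + elapsed * self.rate)
        self._last = now

    def try_acquire(self, tokens: float = 1.0) -> bool:
        """Consume tokens if available; return False instead of blocking."""
        self._refill()
        if self._tokens >= tokens:
            self._tokens -= tokens
            return True
        return False

    def wait_time(self, tokens: float = 1.0) -> float:
        """Seconds until `tokens` will be available."""
        self._refill()
        deficit = tokens - self._tokens
        return max(0.0, deficit / self.rate)
```

For per-IP caps, one approach is to keep a dictionary of buckets keyed by proxy address plus one shared bucket for the global cap, and send a request only when both allow it.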

Tools to monitor and alert

We watch 429 error rates, average latency, and success rates per IP for early signs of throttling. Prometheus and Grafana give us real-time dashboards.

ELK Stack helps us analyze logs and spot trends. Sentry captures exceptions and error spikes. Proxy vendors offer dashboards for health and request volumes.

| Metric | Why It Matters | Recommended Tool |
|---|---|---|
| 429 Error Rate | Shows API throttling or rate limit breaches | Prometheus + Grafana alerts |
| Average Latency | Indicates slow endpoints or overloaded proxies | Grafana dashboards |
| Success Rate per IP | Reveals problematic proxies or bans | ELK Stack for log correlation |
| Request Volume by Worker | Helps balance concurrent load and avoid spikes | Prometheus metrics + provider dashboards |
| Alert Thresholds | Automated triggers to prevent bans | Sentry and Grafana alerting |
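Before wiring metrics into Prometheus or Grafana, the 429 error rate per proxy can be prototyped in-process. The sketch below keeps a rolling window of recent status codes for each proxy. The class name, window size, and threshold are assumptions to tune against real traffic.

```python
from collections import defaultdict, deque
from typing import Deque, Dict


class ThrottleMonitor:
    """Track the share of 429 responses over the last `window` requests per proxy."""

    def __init__(self, window: int = 50, threshold: float = 0.1) -> None:
        self.window = window
        self.threshold = threshold
        self._codes: Dict[str, Deque[int]] = defaultdict(
            lambda: deque(maxlen=window)
        )

    def record(self, proxy: str, status: int) -> None:
        """Store one response code; the oldest code drops out when the window is full."""
        self._codes[proxy].append(status)

    def rate_429(self, proxy: str) -> float:
        codes = self._codes.get(proxy)
        if not codes:
            return 0.0
        return sum(1 for code in codes if code == 429) / len(codes)

    def should_alert(self, proxy: str) -> bool:
        """True when the rolling 429 rate exceeds the configured threshold."""
        return self.rate_429(proxy) > self.threshold
```

A proxy that trips `should_alert` is a candidate for rotation out of the pool or for a lower per-IP cap.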

To stay within rate limits, we combine pacing algorithms with wide proxy rotation and monitoring. This approach keeps us resilient, keeps costs predictable, and avoids service interruptions.

Handling CAPTCHAs

We all deal with CAPTCHAs when scraping search results. These tests, like reCAPTCHA v2 and v3, and hCaptcha, check if we’re human. If we send too many automated requests, we might get a 429 error or be banned.

Understanding CAPTCHA Challenges

CAPTCHAs use visual tests and JavaScript to tell humans from bots. They track mouse movements and cookie history. If it looks like a bot, the site might ask for a CAPTCHA or slow down our requests.

Ignoring CAPTCHAs can lead to 429 errors and even an IP ban. It’s important to treat them as part of the site’s defense.

Tools for Bypassing CAPTCHAs

There are automated solvers and human services like 2Captcha and Anti-Captcha. Each has different prices, success rates, and speeds.

We can use full browser automation with tools like Puppeteer. This makes our requests look more like real users. It’s important to choose wisely and have a plan B for when solvers fail.

Best Practices for Avoiding CAPTCHA Triggers

We can make our requests look more natural by randomizing timing and using different user-agents. Keeping sessions open and using good proxies helps too.

We should avoid blocking page resources such as scripts and images, because incomplete page loads can themselves trigger CAPTCHAs. If we hit limits, we slow down or pause. If we get a CAPTCHA, we wait, change our proxy, and try again.

| Topic | Approach | Benefits | Risks |
|---|---|---|---|
| Browser Automation | Use Puppeteer or Playwright with full JS and session persistence | Higher realism, fewer CAPTCHAs, consistent cookies | Higher resource use, setup complexity |
| CAPTCHA Solvers | 2Captcha, Anti-Captcha, CapMonster or human-in-loop | Fast solving, simple integration | Cost per solve, varying reliability |
| Proxy Strategy | Rotate high-quality residential or mobile proxies | Reduces IP ban risk, spreads requests | Higher cost, management overhead |
| Rate Controls | Randomized delays and adaptive backoff | Prevents 429 too many requests, avoids throttling | Longer crawl times, complexity in tuning |
| Fallback Flow | Pause, rotate proxy, lower rate, retry | Recovers from CAPTCHAs and avoids IP ban | Requires robust error handling |
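The fallback flow in the last row (pause, rotate proxy, retry) could be sketched as follows. The `fetch` and `pause` callables are injected so the flow can be exercised without network access. The challenge markers in `looks_like_captcha` are heuristic assumptions and should be checked against the responses your own crawler actually receives.

```python
from typing import Callable, List, Optional, Tuple

# (url, proxy) -> (status_code, body); supplied by the caller.
Fetch = Callable[[str, str], Tuple[int, str]]


def looks_like_captcha(status: int, body: str) -> bool:
    """Heuristic check; the text markers are assumptions, not a stable contract."""
    lowered = body.lower()
    return status == 429 or "captcha" in lowered or "unusual traffic" in lowered


def fetch_with_fallback(
    url: str,
    proxies: List[str],
    fetch: Fetch,
    pause: Callable[[float], None],
    base_pause: float = 30.0,
) -> Optional[str]:
    """Try each proxy in turn, pausing longer after every challenge."""
    for attempt, proxy in enumerate(proxies):
        status, body = fetch(url, proxy)
        if status == 200 and not looks_like_captcha(status, body):
            return body
        # Challenge or error: back off before switching to the next proxy.
        pause(base_pause * (attempt + 1))
    return None  # Every proxy was challenged; leave the decision to the caller.
```

In production, `pause` would typically be `time.sleep`, and a `None` result would feed a queue for later retry at a lower request rate.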

Data Extraction Techniques

We share practical steps for extracting data from search results and webpages. Our goal is to use strong methods that combine scraping, headless rendering, and API use. This keeps our pipelines strong and easy to manage.

Parsing HTML Responses

We use top parsers like lxml, BeautifulSoup, and Cheerio to make raw responses useful. CSS and XPath selectors help us get titles, snippets, URLs, and JSON-LD easily. This avoids the need for tricky string operations.

Dynamic pages require us to access the DOM after rendering. We use tools like Playwright or Puppeteer for this. Then, we run parsers on the HTML to catch more data and fix errors faster.
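As one example of structured extraction, JSON-LD blocks can be pulled out of rendered HTML without string slicing. The sketch below uses the standard library's `html.parser` to stay dependency-free. The same idea carries over to lxml or BeautifulSoup selectors. The class and function names are illustrative.

```python
import json
from html.parser import HTMLParser
from typing import Any, List


class JsonLdExtractor(HTMLParser):
    """Collect the parsed contents of <script type="application/ld+json"> tags."""

    def __init__(self) -> None:
        super().__init__()
        self._in_jsonld = False
        self._buffer: List[str] = []
        self.items: List[Any] = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            try:
                self.items.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                pass  # Skip malformed blocks instead of failing the whole page.


def extract_json_ld(html: str) -> List[Any]:
    """Return every JSON-LD object found in the HTML, in document order."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    return parser.items
```

Skipping malformed blocks keeps one bad publisher snippet from discarding an otherwise usable page.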

Storing Extracted Data Efficiently

Choosing where to store data depends on how much we have and how we plan to use it. We pick PostgreSQL for structured data, MongoDB for flexible data, S3 for big exports, and BigQuery for analytics. Each has its own role in our pipeline.

We keep schema versions up to date, remove duplicates, and add indexes to speed up queries. Good indexing and storage formats save money and make analysis quicker during load tests.
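Deduplication, indexing, and schema versioning can be pushed into the database itself. The sketch below uses SQLite from the standard library as a stand-in for PostgreSQL. The table name, columns, and uniqueness key are assumptions chosen for illustration.

```python
import sqlite3
from typing import Iterable, Tuple

SCHEMA = """
CREATE TABLE IF NOT EXISTS serp_results (
    keyword        TEXT    NOT NULL,
    region         TEXT    NOT NULL,
    crawl_date     TEXT    NOT NULL,
    position       INTEGER NOT NULL,
    url            TEXT    NOT NULL,
    title          TEXT,
    schema_version INTEGER NOT NULL DEFAULT 1,
    UNIQUE (keyword, region, crawl_date, position)
);
CREATE INDEX IF NOT EXISTS idx_serp_url ON serp_results (url);
"""

INSERT_SQL = """
INSERT OR IGNORE INTO serp_results
    (keyword, region, crawl_date, position, url, title)
VALUES (?, ?, ?, ?, ?, ?)
"""

Row = Tuple[str, str, str, int, str, str]


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open the database and create the table and index if they are missing."""
    conn = sqlite3.connect(path)
    conn.executescript(SCHEMA)
    return conn


def save_results(conn: sqlite3.Connection, rows: Iterable[Row]) -> int:
    """Insert rows, silently skipping duplicates; return how many were new."""
    count_sql = "SELECT COUNT(*) FROM serp_results"
    before = conn.execute(count_sql).fetchone()[0]
    with conn:  # Commits on success, rolls back on error.
        conn.executemany(INSERT_SQL, rows)
    after = conn.execute(count_sql).fetchone()[0]
    return after - before
```

The unique constraint makes re-running a crawl idempotent: a repeated batch stores nothing new instead of doubling the dataset.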

Working with APIs for Enhanced Data

When possible, we use official APIs like Google Custom Search API. This lowers the risk of scraping and makes data more consistent. We combine API data with scraped records to fill in missing information and check field accuracy.

APIs have limits and costs. We manage these by sending requests in batches, caching responses, and setting up retry logic. If APIs aren’t enough, we use elite proxies for targeted scraping. We do this ethically to avoid rate limit issues.

Throughout our process, we apply rules and checks to ensure data accuracy. This makes our datasets reliable and ready for analysis.

Scraping Multiple Locations

When we target search results across regions, we must treat each location as a distinct data source. Search results change by country, city, and language. To mirror local SERPs, we add geo parameters, set Accept-Language headers, and vary queries for local phrasing.

How to Target Different Regions

We build requests that include regional signals such as the uule parameter for Google, country-specific query terms, and the right Accept-Language header. Small changes in query wording can yield different local rankings. So, we test variants for each city or state.
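A request builder for these regional signals might look like the sketch below. The `gl` (country) and `hl` (interface language) query parameters are assumptions about what the target endpoint accepts, and `uule` is passed through as an opaque, already-encoded value because its encoding is outside the scope of this article.

```python
from typing import Dict, Optional, Tuple
from urllib.parse import urlencode


def build_serp_request(
    query: str,
    country: str = "us",
    language: str = "en",
    uule: Optional[str] = None,
) -> Tuple[str, Dict[str, str]]:
    """Return (url, headers) for a localized search request."""
    params = {"q": query, "gl": country, "hl": language}
    if uule:
        params["uule"] = uule  # Pre-encoded location value, passed through as-is.
    url = "https://www.google.com/search?" + urlencode(params)
    headers = {
        # Prefer the regional language variant, then the base language.
        "Accept-Language": f"{language}-{country.upper()},{language};q=0.9",
    }
    return url, headers
```

Keeping the URL parameters and the `Accept-Language` header in one function makes it harder to send mismatched signals, such as a German header with a U.S. country code.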

Utilizing Geo-Targeting with Proxies

We select proxies that match our target locations so requests appear to come from the intended region. Residential proxies and ISP-assigned IPs deliver higher trust scores for local results. Many providers let us pick city-level endpoints, which simplifies geo-targeting and ensures Google returns localized SERPs.

Challenges of Multi-Location Scraping

We face operational hurdles when scaling a geographically diverse proxy pool. Maintaining many regional IPs increases cost and complexity, while latency can slow crawls. Regional CAPTCHAs often appear more frequently, which forces us to rotate proxies and integrate human-solvers or smart retry logic.

Legal rules vary by country, so we map data protection requirements before scraping each market. Rate policies differ per region, so we design regional throttles to bypass rate limits and avoid triggering local IP blocks.

Batch scheduling helps us control load and keep behavior predictable. We group requests by time zone, apply per-region rate limiting, and monitor response patterns to adapt proxy selection. These methods improve reliability when performing multi-location scraping at scale.

Testing and Troubleshooting

We test and fix problems to keep scraping pipelines running smoothly. This phase focuses on common failures, how to debug them, and steps to take when issues arise.

Common issues include 429 too many requests, CAPTCHAs, and blocked IPs. These problems can be caused by too many requests, automated behavior, or changes in the website’s structure. Timeouts and pages that only load with JavaScript are also common issues.

We start by testing problems locally before making big changes. First, we try the same request from one IP, then from many. We check the request and response headers for any clues.

Logging full HTML responses helps us spot problems. We use browser devtools to look at the DOM and network timing. We also track user-agent and cookie behavior.

Granular logs are key. We log proxy used, latency, response code, and the raw body for each request. This helps us find the cause of problems like 429 too many requests.
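A per-request log record with these fields could be built as shown below. This sketch emits one JSON line per request and truncates the body to a preview. Storing full bodies elsewhere, for example in object storage, is a design choice assumed here, not something the pipeline above requires.

```python
import json
import logging

logger = logging.getLogger("serp_scraper")


def build_log_record(
    proxy: str,
    url: str,
    status: int,
    latency_ms: float,
    body: str,
    max_body: int = 2000,
) -> dict:
    """Assemble the per-request fields used when debugging throttling."""
    return {
        "proxy": proxy,
        "url": url,
        "status": status,
        "latency_ms": round(latency_ms, 1),
        "body_length": len(body),
        "body_preview": body[:max_body],  # Keep log lines bounded in size.
    }


def log_request(**fields) -> str:
    """Emit one JSON line per request and return it for convenience."""
    line = json.dumps(build_log_record(**fields), sort_keys=True)
    logger.info(line)
    return line
```

JSON lines are straightforward to ship into the ELK Stack, where status codes can be grouped by proxy to find the address behind a burst of 429 responses.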

When debugging, we change one thing at a time. If the problem goes away, we know what caused it. We use canary runs to test small groups of pages before making changes.

We do controlled load testing to avoid surprises. Tools like Apache JMeter and k6 help us test traffic slowly. This helps us see how systems handle pressure before real traffic hits.

For recurring problems like IP bans, we have a runbook. The runbook includes steps like rotating proxies and reducing concurrency. We schedule regular checks to make sure everything is stable.

Here are some quick tips for troubleshooting:

- Reproduce the error locally with a single IP and with the proxy pool.

- Inspect headers, cookies, and full HTML responses for anomalies.

- Log per-request metadata: proxy, latency, response code, and body.

- Isolate one variable at a time: proxy, user-agent, then headers.

- Run load testing with JMeter or k6 and perform canary runs.

- Keep a runbook for 429 too many requests and IP ban recovery steps.

We keep improving our fixes and testing. This approach helps us respond faster and keeps data collection consistent.

Adapting to Algorithm Changes

Google updates its ranking signals and SERP layouts often. These changes can break parsers and alter how we detect content. It’s crucial to monitor algorithms closely to catch these changes early.

We check live SERPs and sample results across different areas. Regular checks help us spot important DOM edits. When we find differences, we review and decide if we need to update our methods.

Our scraping strategy is based on modular parts. We create parsers that keep extraction rules separate from request logic. This makes it easier to update without redeploying the whole scraper. We also use automated DOM diff detection to quickly find layout changes.
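One lightweight way to approximate DOM diff detection is to compare the set of tag-and-class signatures between a stored baseline page and a fresh one. The sketch below is a coarse heuristic under assumed names: it ignores nesting and text, and the similarity threshold for raising an alert is left to the operator.

```python
from html.parser import HTMLParser
from typing import Set


class _SignatureCollector(HTMLParser):
    """Record one 'tag.class1.class2' signature per start tag."""

    def __init__(self) -> None:
        super().__init__()
        self.signatures: Set[str] = set()

    def handle_starttag(self, tag, attrs):
        classes = dict(attrs).get("class") or ""
        self.signatures.add(tag + "." + ".".join(sorted(classes.split())))


def dom_signatures(html: str) -> Set[str]:
    collector = _SignatureCollector()
    collector.feed(html)
    collector.close()
    return collector.signatures


def layout_similarity(old_html: str, new_html: str) -> float:
    """Jaccard similarity of the two signature sets (1.0 means identical structure)."""
    old, new = dom_signatures(old_html), dom_signatures(new_html)
    if not old and not new:
        return 1.0
    return len(old & new) / len(old | new)
```

A sudden drop in similarity between consecutive crawls of the same query is a cue to review selectors before the parsers start returning empty fields.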

We keep our rate limiting and fingerprinting flexible. Adjusting how often we make requests helps avoid API throttling. If block rates rise, we review proxy quality and distribution first instead of resorting to unsafe ways to bypass limits.

We test our scraping in staging against live SERPs. These tests help us catch problems early. We also simulate distributed requests at a small scale to make sure everything works before we go live.

We stay updated by following reliable sources. Google’s Official Search Central blog and sites like Moz and Search Engine Journal keep us informed. We also check developer forums and GitHub projects for technical details.

We get updates from changelogs for tools like Puppeteer and Playwright. These updates can affect how we render and intercept content. Proxy providers also send us notices when things change, helping us adjust our requests.

| Area | Why It Matters | Action Items |
|---|---|---|
| Structure Changes | Alters selectors and extraction accuracy | Run DOM diffs, update modular parsers, retest |
| Ranking Volatility | Signals algorithm updates that affect SERP content | Increase monitoring cadence, compare historical SERPs |
| Rate Controls | Can trigger API throttling and blocks | Tune rate limiting, emulate human pacing, log throttles |
| Proxy Health | Poor proxies raise block rates and skew results | Assess provider advisories, rotate pools, test geo coverage |
| Tooling Updates | Changes in headless browsers affect rendering | Track changelogs, run compatibility tests, patch quickly |
| Traffic Pattern Tests | Helps validate behavior under distributed requests | Simulate distributed requests at small scale, monitor metrics |

Ensuring Data Quality

We focus on keeping our SERP datasets reliable and useful. We check for errors right after we crawl data. This way, we avoid big problems later and don’t have to make too many requests.

We use different ways to make sure our data is correct. We check URLs for silent errors and remove duplicate records. We also make sure the data fits the expected format and compare it to known samples.

To clean the data, we make sure everything is in the right format. We remove extra spaces and make dates and numbers consistent. Adding extra information helps us find where problems come from.
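The cleaning and validation steps can be sketched as two small functions. The field names (`keyword`, `title`, `url`, `position`, `crawl_date`) and the ISO date format are assumptions about the record layout, chosen for illustration.

```python
from datetime import date
from typing import Any, Dict, List
from urllib.parse import urlsplit


def clean_record(raw: Dict[str, Any]) -> Dict[str, Any]:
    """Collapse whitespace, lowercase keywords, and trim URL and date strings."""
    return {
        "keyword": " ".join(str(raw.get("keyword", "")).split()).lower(),
        "title": " ".join(str(raw.get("title", "")).split()),
        "url": str(raw.get("url", "")).strip(),
        "position": raw.get("position"),
        "crawl_date": str(raw.get("crawl_date", "")).strip(),
    }


def validate_record(record: Dict[str, Any]) -> List[str]:
    """Return the names of fields that fail validation (empty list means valid)."""
    errors: List[str] = []
    parts = urlsplit(record["url"])
    if parts.scheme not in ("http", "https") or not parts.netloc:
        errors.append("url")
    if not isinstance(record["position"], int) or record["position"] < 1:
        errors.append("position")
    try:
        date.fromisoformat(record["crawl_date"])  # Expects YYYY-MM-DD.
    except ValueError:
        errors.append("crawl_date")
    if not record["keyword"]:
        errors.append("keyword")
    return errors
```

Returning field names instead of raising on the first failure makes it easier to route a bad record to manual review with all of its problems listed at once.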

We use tools like Apache Airflow or Prefect to manage our data. This makes it easier to track changes and fix issues. It also helps us see how cleaning data affects our results.

We have rules to catch any mistakes in our data. If we find a problem, we review it by hand and update our methods. This keeps our data accurate without needing to scrape everything again.

For analyzing our data, we use Python and SQL. We also use Looker and Tableau for visualizing trends. We have dashboards in Grafana to show how our data is doing.

We use anomaly detection to spot sudden changes in our data, such as a sharp drop in result counts. This lets us re-crawl only the affected queries, so we make extra requests only when they are really needed and stay within rate limits.

We have a simple checklist for our data. We check for the right format, remove duplicates, and add extra information. This keeps our data consistent and saves us time.

Scaling Your Scraping Efforts

As our project grows, we need to scale without breaking patterns or getting blocked. Scaling scraping means making technical choices that balance speed, cost, and reliability. We explore ways to increase crawling capacity while keeping data quality and access safe.

When to expand operations

We scale when we need more data, like more keywords or higher refresh rates. Monitoring SERPs in real-time and needing to do more things at once are signs to grow. Business needs often drive the need for more coverage before we can adjust technically.

Strategies for efficient growth

We prefer horizontal scaling with worker pools to keep tasks separate and stable. Sharding by keyword or region helps avoid conflicts and makes retries easier. Using message queues like RabbitMQ or Kafka helps manage distributed requests and handle spikes.

Container orchestration with Kubernetes lets us scale based on load. Having a big proxy pool spreads out traffic and lowers the chance of getting banned. We carefully manage rate limits across workers to avoid getting blocked by APIs.
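Sharding by keyword or region needs an assignment that is stable across workers and restarts. The sketch below hashes the region and keyword with SHA-256 for that reason, since Python's built-in `hash()` for strings is randomized per process. The function names are illustrative.

```python
import hashlib
from collections import defaultdict
from typing import Dict, Iterable, List


def shard_for(keyword: str, region: str, num_shards: int) -> int:
    """Map a (region, keyword) pair to a stable shard index."""
    digest = hashlib.sha256(f"{region}:{keyword}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_shards


def partition(
    keywords: Iterable[str], region: str, num_shards: int
) -> Dict[int, List[str]]:
    """Group keywords by shard so each worker pool gets its own slice."""
    shards: Dict[int, List[str]] = defaultdict(list)
    for keyword in keywords:
        shards[shard_for(keyword, region, num_shards)].append(keyword)
    return dict(shards)
```

Because the same keyword always lands on the same shard, a retry goes back to the worker pool that already holds that keyword's session state.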

Managing resources effectively

We save money by comparing proxy costs to the value of the data we get. Caching common queries and focusing on important keywords reduces unnecessary requests. Setting a retry budget stops retries from getting too expensive and raising detection risks.
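A retry budget can be expressed as a cap on retries relative to total requests. The sketch below is one simple formulation. The 10 percent ratio and the minimum allowance are placeholder values, not recommendations.

```python
class RetryBudget:
    """Permit retries only while they stay under a fraction of all requests."""

    def __init__(self, ratio: float = 0.1, min_retries: int = 5) -> None:
        self.ratio = ratio
        self.min_retries = min_retries
        self.requests = 0
        self.retries = 0

    def record_request(self) -> None:
        """Count one first-attempt request toward the budget."""
        self.requests += 1

    def can_retry(self) -> bool:
        allowed = max(self.min_retries, int(self.requests * self.ratio))
        return self.retries < allowed

    def record_retry(self) -> bool:
        """Spend one retry if the budget allows it; return whether it was granted."""
        if not self.can_retry():
            return False
        self.retries += 1
        return True
```

When `record_retry` returns `False`, the job is better parked for a later run than retried immediately, which protects both proxy spend and detection risk.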

Regular load testing with tools like k6 or Apache JMeter checks how we perform under heavy traffic. This helps us find and fix problems before they cause issues in production.

| Scaling Area | Approach | Benefit | Tool Examples |
|---|---|---|---|
| Task Distribution | Worker pools with sharding by keyword/region | Reduces contention; easier retries | Celery, Kubernetes Jobs |
| Traffic Coordination | Message queues to buffer and sequence jobs | Smooths bursts; enables backpressure | RabbitMQ, Apache Kafka |
| Proxy Management | Large proxy pools with rotation and health checks | Lowers ban risk; enables distributed requests | Residential proxy providers, in-house pools |
| Rate Control | Centralized rate limiting and per-worker caps | Avoids API throttling and failed batches | Envoy, Redis token bucket |
| Performance Validation | Periodic load testing and chaos drills | Identifies bottlenecks before outages | k6, Apache JMeter |
| Cost Optimization | Caching, prioritization, and retry budgets | Improves ROI on proxy and compute spend | Redis, Cloud cost monitoring |

Staying Compliant with Data Regulations

We need to balance our scraping needs with legal duties when collecting search results. Laws like GDPR and CCPA limit how we process personal data. They also give rights to individuals. Knowing these rules helps us avoid legal trouble and protect our users.

Understanding GDPR and CCPA

GDPR is the European Union regulation that requires a lawful basis for processing personal data. It also limits processing to stated purposes and gives people the right to access and delete their data. Breaking these rules can lead to fines and investigations.

CCPA is a California state law that focuses on consumer rights. It requires us to give notice, allow opt-out, and delete data upon request. Since U.S. privacy laws vary by state, we watch both federal and state actions closely.

Best Practices for Compliance

We try to collect as little personal data as possible. When we do need personal data, we anonymize or hash it. We also keep a document explaining why we collect it and how long we keep it.
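Hashing personal fields before storage might look like the sketch below, which uses a keyed HMAC-SHA256 so the same input always maps to the same token without the raw value being stored. The function name and key handling are assumptions. Note that keyed hashing is generally treated as pseudonymization, not full anonymization, so the output may still count as personal data.

```python
import hashlib
import hmac


def pseudonymize(value: str, secret_key: bytes) -> str:
    """Return a stable, keyed SHA-256 token for a personal data field."""
    normalized = value.strip().lower().encode("utf-8")
    return hmac.new(secret_key, normalized, hashlib.sha256).hexdigest()
```

The secret key should live outside the dataset, for example in a secrets manager, so that anyone who obtains the stored tokens cannot recompute them from guessed inputs.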

We have systems in place for people to opt out and have their data removed. For big projects, we get legal advice and do privacy impact assessments. This helps us catch risky practices, such as using proxies to bypass rate limits, before they become legal trouble.

We have rules for when to stop scraping and how to notify people. These rules help us stay safe and show we’re responsible to regulators.

Monitoring Legal Changes

We keep an eye on updates from the European Data Protection Board, the FTC, and state regulators. We also subscribe to legal newsletters and privacy services. This way, we catch new rules early.

We automate checks in our pipeline, like data audits and privacy impact assessments. These steps help us stay up-to-date with changing laws. They also let us respond quickly when rules change.

Real-World Applications of SERP Scraping

We use SERP scraping in many ways to help businesses make smart choices. It supports market research, competitor analysis, SEO, and targeted outreach.

Market Research and Competitor Analysis

Tracking how competitors rank is key. SERP scraping helps us see these changes. It shows us where our content might be lacking.

It also helps us see how well brands like Starbucks or Home Depot do in local markets.

We look at product mentions and prices to compare offers. This helps us set prices and position our products better.

SEO and Digital Marketing Strategies

Scraped SERP data helps us track rankings and see how we do in special features. This info guides our content and paid search plans.

To monitor more often, we use special proxies and spread out our requests. This way, we avoid getting banned and can spot drops fast.

Lead Generation and Outreach

Scraping SERPs helps us find niche directories and local listings. It’s great for finding leads in real estate and professional services.

We follow the rules and respect sites when we get contact info. This keeps our outreach ethical and compliant.

Conclusion: Best Practices for Safe SERP Scraping

We began by discussing legal and ethical guidelines for scraping search results. Our guide includes using residential or elite proxies for privacy and stability. It also covers proxy rotation and data validation to keep information accurate.

We also talked about creating realistic browser automation to avoid CAPTCHA issues. This helps us avoid getting blocked by rate limits.

Recap of Key Takeaways

Before scraping data, we need to know about laws like GDPR and CCPA. Elite proxies or high-quality residential providers are best for sensitive tasks. We should also use strong rate limiting and retry logic to avoid getting blocked.

Monitoring for API throttling and setting up alerts helps catch problems early. This reduces the risk of getting banned.

Final Recommendations for Success

Start with small pilots to test proxy providers and see how they perform. Keep your parsers flexible for quick updates. Focus on privacy and data storage to ensure accuracy.

Be cautious when trying to bypass rate limits. Find a balance between efficiency and respect for the services you’re using. Invest in monitoring to quickly spot API throttling or 429 errors.

Future Trends in SERP Scraping

Expect more defenses against headless browser fingerprinting and stricter laws on automated data collection. Managed data APIs might reduce the need for scraping. Proxy services will improve with better geo-targeting and session management.

To stay ahead, follow technical blogs, vendor updates, and legal resources. This way, our strategies can adapt to the changing landscape.

FAQ

What is the safest way to scrape Google SERPs without getting blocked?

Use high-quality proxies to spread out your requests. Set strict limits and random delays to avoid being blocked. Use full browser automation to act like a real user. Rotate user agents and cookies often.

Watch for 429 errors and CAPTCHAs. Start small and grow slowly to avoid getting banned.

Should we use residential, datacenter, or mobile proxies for SERP scraping?

It depends on what you need. Residential and mobile proxies are less likely to get blocked. Datacenter proxies are faster but more likely to be detected and blocked.

For big projects, mix proxy types. Use elite proxies for the most important tasks.

How do we handle 429 Too Many Requests and API throttling?

Slow down when you get 429 errors. Use smart backoff and rate limits. Spread out your requests with a big proxy pool.

Limit how many requests each proxy can handle. Track 429 trends and set alerts that trigger a lower request rate or a proxy swap.
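The backoff advice above can be sketched as exponential backoff with random jitter. This is a minimal illustration, not a definitive implementation: `backoff_delay`, `fetch_with_backoff`, and the `send` callable (assumed to return a status code, a headers dict, and a body) are hypothetical names, and only the numeric form of the `Retry-After` header is handled.

```python
import random
import time


def backoff_delay(attempt, base=1.0, cap=60.0, retry_after=None, rng=random.random):
    """Seconds to wait before retrying; `attempt` starts at 0."""
    if retry_after is not None:
        try:
            # Honor a numeric Retry-After hint from the server when present.
            return max(0.0, float(retry_after))
        except (TypeError, ValueError):
            pass  # The HTTP-date form is not parsed in this sketch.
    # The ceiling doubles with each attempt, up to `cap` seconds.
    ceiling = min(cap, base * (2 ** attempt))
    # Full jitter: wait a random fraction of the ceiling so that many
    # workers do not retry at the same moment.
    return rng() * ceiling


def fetch_with_backoff(send, max_attempts=5, sleep=time.sleep):
    """Call `send()` until it stops returning 429 or attempts run out.

    `send` is a hypothetical callable returning (status, headers, body).
    """
    for attempt in range(max_attempts):
        status, headers, body = send()
        if status != 429:
            return status, body
        sleep(backoff_delay(attempt, retry_after=headers.get("Retry-After")))
    raise RuntimeError("still rate limited after %d attempts" % max_attempts)
```

Passing `sleep` and `rng` as parameters keeps the logic easy to test without real waiting. In production you would wrap your HTTP client call in `send`.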

What configuration patterns do you recommend for proxy rotation?

Rotate proxies per session or per request, depending on your needs. Use sticky sessions for tasks that need cookies. Rotate per request for simple, stateless GETs.

Use username/password, IP whitelisting, or tokens for authentication. Manage connections and timeouts to avoid too many retries.
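The two rotation patterns above can be sketched with a small helper. This is an illustrative sketch under stated assumptions: the `ProxyRotator` class and its method names are hypothetical, and the proxy strings stand in for whatever URLs your provider issues.

```python
import itertools


class ProxyRotator:
    """Round-robin rotation with optional sticky sessions (hypothetical helper)."""

    def __init__(self, proxies):
        self._proxies = list(proxies)
        if not self._proxies:
            raise ValueError("at least one proxy is required")
        self._cycle = itertools.cycle(self._proxies)
        self._sticky = {}

    def next_proxy(self):
        # Per-request rotation: every call moves to the next proxy.
        return next(self._cycle)

    def sticky_proxy(self, session_id):
        # Per-session rotation: the same session keeps the same proxy,
        # so cookies and IP address stay consistent.
        if session_id not in self._sticky:
            self._sticky[session_id] = self.next_proxy()
        return self._sticky[session_id]

    def release(self, session_id):
        # Forget a finished (or blocked) session so it gets a fresh proxy.
        self._sticky.pop(session_id, None)
```

With the requests library, the chosen proxy URL would typically be passed as `proxies={"http": proxy, "https": proxy}` together with an explicit `timeout`.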

How do we reduce CAPTCHA frequency and handle CAPTCHAs when they appear?

Use top-notch proxies and realistic browser automation. Keep sessions open and use random timing. Make sure to load all resources.

When CAPTCHAs pop up, pause and swap proxies or sessions. For big jobs, use CAPTCHA-solving services carefully. Prevent CAPTCHAs whenever possible.

Which tools and libraries are best for building a scraper that handles dynamic SERPs?

For browser-based scraping, choose Puppeteer or Playwright in Node.js. Playwright or Selenium in Python work well too. For HTTP scraping, use requests, aiohttp, or Go’s net/http.

Pair them with parsers like BeautifulSoup or lxml for data extraction. Use proxy management libraries and Docker for reproducible environments.

How can we target SERPs for different regions and cities reliably?

Use geo-located proxies and set locale headers. Include the required cities or ISPs in your proxy pool. Apply regional rate limits to avoid bans.

Test results in each location and account for latency and CAPTCHA patterns.
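One way to combine a geo-located proxy with locale settings is sketched below. Treat it as an assumption-laden example: `build_geo_request` and the `proxies_by_country` mapping are hypothetical, and the `hl` (interface language) and `gl` (country) query parameters are commonly used with Google Search but should be verified against live results for your target locations.

```python
from urllib.parse import urlencode


def build_geo_request(query, country, language, proxies_by_country):
    """Describe one localized request: URL, headers, and the proxy to use."""
    # Fails loudly (KeyError) if the pool has no proxy for this country.
    proxy = proxies_by_country[country]
    params = {"q": query, "hl": language, "gl": country}
    headers = {
        # Locale header matching the proxy's region, e.g. "en-US,en;q=0.9".
        "Accept-Language": f"{language}-{country.upper()},{language};q=0.9",
    }
    return {
        "url": "https://www.google.com/search?" + urlencode(params),
        "headers": headers,
        "proxy": proxy,
    }
```

Keeping the proxy region, query parameters, and `Accept-Language` header consistent avoids sending mixed signals about where the request comes from.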

What storage and data quality practices should we follow after scraping?

Store data with metadata like timestamp and proxy ID. Use schema validation and deduplication. Choose the right storage for your needs.

Build ETL pipelines and monitor data quality. Reliable stored data means less re-scraping, which in turn means less exposure to rate limiting.
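As a rough sketch of these storage practices, the snippet below validates rows against a simple schema, drops duplicates, and attaches a timestamp and proxy ID. The field names in `REQUIRED_FIELDS` and the function names are assumptions chosen for illustration, not a fixed format.

```python
import hashlib
import json
from datetime import datetime, timezone

# Assumed minimal schema for one organic result.
REQUIRED_FIELDS = {"query": str, "position": int, "url": str, "title": str}


def validation_errors(row):
    """List the fields that are missing or have the wrong type."""
    return [
        f"{name}: expected {expected.__name__}"
        for name, expected in REQUIRED_FIELDS.items()
        if not isinstance(row.get(name), expected)
    ]


def dedup_key(row):
    """Stable hash of the fields that identify a result."""
    raw = json.dumps([row["query"], row["position"], row["url"]])
    return hashlib.sha256(raw.encode("utf-8")).hexdigest()


def prepare_rows(rows, proxy_id, scraped_at=None):
    """Return (clean, rejected); clean rows carry scrape metadata."""
    scraped_at = scraped_at or datetime.now(timezone.utc).isoformat()
    seen, clean, rejected = set(), [], []
    for row in rows:
        errors = validation_errors(row)
        if errors:
            rejected.append((row, errors))
            continue
        key = dedup_key(row)
        if key in seen:
            continue  # Duplicate within this batch.
        seen.add(key)
        clean.append({**row, "scraped_at": scraped_at,
                      "proxy_id": proxy_id, "dedup_key": key})
    return clean, rejected
```

Keeping rejected rows alongside their errors makes it easier to tell a parser regression from a one-off bad page.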

How do we test and debug scraping failures like partial renders, timeouts, or DOM changes?

Reproduce issues locally with the same settings. Log headers and HTML snapshots. Use devtools to inspect the DOM.

Add detailed logs for each request. Run load tests to find rate-limiting issues and adjust settings.

What compliance and legal safeguards should we implement when scraping SERPs?

Check Google’s Terms of Service and robots.txt. Minimize PII collection and anonymize data. Document your processes and keep records.

Implement opt-out and deletion workflows. Consult legal experts for big projects. Following GDPR and CCPA reduces legal risks.

When should we scale our scraping infrastructure and how do we avoid amplified detection?

Scale when your needs grow. Use worker pools and message queues for horizontal scaling. Autoscale containers for efficiency.

Coordinate rate limits across workers and shard jobs by region or keyword. Expand proxy pools as volume grows, and load test at each step so that added workers do not amplify detection.
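Sharding by region and keyword while keeping one shared request budget might look like the sketch below. The names `shard_for` and `plan_shards`, and the idea of splitting a global requests-per-second budget evenly, are illustrative assumptions.

```python
import hashlib
from collections import defaultdict


def shard_for(region, keyword, num_shards):
    """Map a (region, keyword) job to a shard index, stable across processes."""
    # hashlib is used instead of the built-in hash(), which is randomized
    # per process for strings and would break stable assignment.
    digest = hashlib.sha256(f"{region}\x1f{keyword}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_shards


def plan_shards(jobs, num_shards, global_rps):
    """Group jobs by shard and split the global rate budget evenly."""
    shards = defaultdict(list)
    for region, keyword in jobs:
        shards[shard_for(region, keyword, num_shards)].append((region, keyword))
    # Each shard gets an equal slice, so adding workers does not raise
    # the total request rate seen by the target.
    per_shard_rps = global_rps / num_shards
    return dict(shards), per_shard_rps
```

Because the same job always lands on the same shard, a keyword is not scraped by two workers at once, and the total rate stays fixed as you add shards.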

Are there alternatives to scraping for SERP data?

Yes. Official APIs and third-party providers offer clearer legal footing and handle rate limiting for you. But they have their own limits. Combine APIs with selective scraping for full coverage.

Which proxy providers do you recommend for high-success SERP scraping?

Check out Bright Data, Oxylabs, Smartproxy, NetNut, and Storm Proxies. Each has different features. Test them live and measure success rates before choosing.

How do we stay up to date with algorithm and layout changes that break scrapers?

Watch for changes in SERP structure and ranking. Use automated DOM diffs and continuous integration tests. Follow Google and industry sources.

Keep your scraper flexible and ready for updates. Deploy fixes quickly when needed.
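An automated DOM diff can be as simple as comparing the tag and class structure of a saved page against a fresh one. The sketch below uses only the standard library; `structure_signature` and `structure_changed` are hypothetical helpers, and a real check would likely focus on the result container rather than the whole page.

```python
from html.parser import HTMLParser


class _TagCollector(HTMLParser):
    """Record each start tag with its sorted class names, ignoring text."""

    def __init__(self):
        super().__init__()
        self.signature = []

    def handle_starttag(self, tag, attrs):
        classes = dict(attrs).get("class") or ""
        self.signature.append((tag, tuple(sorted(classes.split()))))


def structure_signature(html):
    """Return the page's structural outline as a list of (tag, classes)."""
    collector = _TagCollector()
    collector.feed(html)
    collector.close()
    return collector.signature


def structure_changed(old_html, new_html):
    """True when the tag/class outline differs, regardless of text content."""
    return structure_signature(old_html) != structure_signature(new_html)
```

Running a check like this in continuous integration against a stored snapshot flags layout changes before they silently break the parser.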