We use proxies, especially rotating ones, to make Selenium-driven browser automation reliable at high volume. Rotating proxies spread requests across many IP addresses, which lowers the risk of IP bans and makes traffic look like it comes from many users. For automated scraping at scale, integrating Selenium with proxies and IP rotation is essential.

This article is for developers, QA engineers, data teams, and DevOps staff in the United States who run Selenium automation at scale. It includes 15 sections on setup, integration, proxy rotation, sticky sessions, authentication, and more.

Readers will get practical tips: sample configurations, guidance on proxy selection, IP rotation, and sticky-session methods. You’ll also learn about performance trade-offs in automated scraping.

Key Takeaways

- Rotating proxies and IP rotation are critical for reducing bans during automated scraping.

- Selenium proxies enable distributed, realistic traffic patterns for testing and data extraction.

- We will cover sticky-session methods that maintain session state when needed.

- The guide includes setup examples, rotation strategies, and troubleshooting steps.

- Expect practical tips on provider selection and balancing performance with anonymity.

Understanding Selenium and its Capabilities

We introduce the core concepts behind Selenium automation. Selenium is used for both testing and automated scraping. The suite scales from single-browser checks to distributed test runs, and it fits well into CI/CD pipelines built on Jenkins or GitHub Actions.

What is Selenium?

Selenium is an open-source suite. It includes WebDriver, Selenium Grid, and Selenium IDE. WebDriver controls Chrome, Firefox, Edge, and more. Grid runs tests in parallel across machines. IDE supports quick recording and playback for simple flows.

The project has an active community and works with tools like Jenkins and GitHub Actions. This makes it easy to add browser tests to build pipelines and automated scraping jobs.

Key Features of Selenium

We list the most useful features for engineers and QA teams.

- Cross-browser support — run the same script in Chrome, Firefox, Edge, Safari.

- Element interaction — click, sendKeys, select, and manipulate DOM elements.

- JavaScript execution — run scripts in-page for complex interactions.

- Wait strategies — explicit and implicit waits to handle dynamic content.

- Screenshot capture — record visual state for debugging and reporting.

- Network interception — available through browser extensions or DevTools hooks for deeper inspection.

- Parallelization — use Selenium Grid to speed up large suites and distributed automated scraping tasks.

How Selenium Automates Browsers

We explain the WebDriver protocol and the flow between client libraries and browser drivers. Client bindings in Python, Java, and C# send commands through WebDriver to drivers such as chromedriver and geckodriver.

Those drivers launch and control browser instances. Each session exposes network and client-side signals such as cookies, headers, and the originating IP address, so a WebDriver session without network-level controls can be identified and linked across visits. Sticky sessions also affect how servers track repeated visits.

Limits and network considerations

We note practical limits: headless detection, complex dynamic JavaScript, and anti-bot measures. Proxies help at the network layer by masking IPs, spreading requests so that per-IP limits are reached less often, and supporting sticky sessions for stateful workflows. Combining proxies with Selenium automation removes some detection vectors and makes automated scraping more robust.

| Component | Role | Relevant for |
|---|---|---|
| Selenium WebDriver | Programmatic control of browser instances | Browser automation, automated scraping, CI tests |
| Selenium Grid | Parallel and distributed test execution | Scale tests, reduce runtime, manage multiple sessions |
| Selenium IDE | Record and playback for quick test prototypes | Rapid test creation, demo flows, exploratory checks |
| Browser Drivers (chromedriver, geckodriver) | Translate WebDriver commands to browser actions | Essential for any WebDriver-based automation |
| Proxy Integration | Mask IPs, manage sticky sessions, bypass limits | Automated scraping, privacy-aware testing, geo-specific checks |

The Importance of Proxies in Automated Testing

Proxies are key when we scale automated browser tests with Selenium. They control where requests seem to come from. This protects our internal networks and lets us test content that depends on location.

Using proxies wisely helps avoid hitting rate limits and keeps our infrastructure safe during tests.

Enhancing Privacy and Anonymity

We use proxies to hide our IP. This way, test traffic doesn’t show our internal IP ranges. It keeps our corporate assets safe and makes it harder for servers to link multiple test requests to one source.

Routing browser sessions through proxies improves privacy because test traffic is less likely to reveal our infrastructure. Short-lived credentials and careful logging practices add further protection for test data.

Bypassing Geographic Restrictions

To test content for different regions, we need proxies in those locations. We choose residential or datacenter proxies to check how content, currency, and language work in different places.

Using proxies from various regions helps us see how content is delivered and what’s blocked. This ensures our app works right across markets and catches localization bugs early.

Managing Multiple Concurrent Sessions

Running many Selenium sessions at once can trigger server rules when they share an IP. We give each worker a unique proxy to spread the load and lower the risk of being slowed down.

Sticky session strategies keep a stable connection for a user flow. At the same time, we rotate IPs across the pool. This balance keeps stateful testing going while reducing long-term correlation risks.

| Testing Goal | Proxy Strategy | Benefits |
|---|---|---|
| Protect internal networks | Use anonymizing proxies with strict access controls | Improved privacy and anonymity; masks origin IP |
| Validate regional content | Choose residential or datacenter proxies by country | Accurate geo-targeted results; reliable UX testing |
| Scale parallel tests | Assign unique proxies and implement IP rotation | Reduces chance of hitting request limits; avoids IP bans |
| Maintain stateful sessions | Use sticky IP sessions within a rotating pool | Preserves login state while enabling rotating proxies |

Types of Proxies We Can Use

Choosing the right proxy type is key for reliable automated browser tests with Selenium. We discuss common types, their benefits, and the trade-offs for web scraping and testing.

HTTP and HTTPS Proxies

HTTP proxies handle web traffic and can rewrite headers. They follow the usual HTTP semantics for redirects and carry HTTPS traffic by tunneling it with the CONNECT method. Providers such as Bright Data (formerly Luminati) offer HTTP(S) proxies that work with WebDriver.

For standard web pages and forms, HTTP proxies are usually sufficient. They’re easy to set up in Selenium and work well for many tasks. They’re a good fit when you need to control headers and requests.

SOCKS Proxies

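SOCKS proxies forward raw TCP streams, and SOCKS5 can also relay UDP. SOCKS5 supports username/password authentication, and because the proxy works below the HTTP layer, WebSocket traffic passes through unchanged. Use them for full-protocol forwarding or when pages rely on WebSockets.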

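SOCKS proxies lack the application-layer features of HTTP proxies. They do not rewrite headers, so traffic reaches the target unmodified. Check whether your provider supports username/password or token-based access, and verify that your browser can use it, because browser support for authenticated SOCKS proxies is limited.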

Residential vs. Datacenter Proxies

Residential proxies use ISP-assigned IPs, which anti-bot systems tend to trust more than hosted addresses. They’re good for high-stakes scraping and mimicking real users. They cost more and are often slower than datacenter proxies.

Datacenter proxies are fast and cheap, perfect for large-scale tests. They’re more likely to get blocked by anti-bot systems. Use them for low-risk tasks or internal testing.

Combining residential and datacenter proxies is a good strategy. Use datacenter proxies for wide coverage and switch to residential for blocked requests. This balances cost, speed, and success.

Considerations for Rotating Proxies

Rotating proxies change IPs for each request or session. Adjust pool size, location, and session stickiness for your needs. A bigger pool means each address is reused less often. Spread the pool across regions when you need region-locked content.

Choose providers with stable APIs and clear authentication. For session-based tests, use sticky sessions. For broad scraping, fast rotation is better.

| Proxy Type | Best Use | Pros | Cons |
|---|---|---|---|
| HTTP/HTTPS | Standard web scraping, Selenium tests | Easy WebDriver integration, header control, wide support | Limited to HTTP layer, possible detection at scale |
| SOCKS5 | WebSockets, non-HTTP traffic, full-protocol forwarding | Protocol-agnostic, supports TCP/UDP, transparent forwarding | Fewer app-layer features, variable auth methods |
| Residential proxies | High-trust scraping, anti-bot heavy targets | Better success rates, appear as real ISP addresses | Higher cost, higher latency |
| Datacenter proxies | Large-scale testing, low-cost parallel jobs | Fast, inexpensive, abundant | Easier to block, lower trust |
| Rotating proxies | Distributed scraping, evasion of rate limits | Reduced bans, flexible session control | Requires careful pool and provider choice |

Match your proxy choice to your task. HTTP proxies are good for routine Selenium tests. SOCKS proxies are better for real-time or non-HTTP traffic. For tough targets, use residential proxies and rotating proxies with good session control.

Setting Up Python for Selenium Testing

Before we add proxies, we need a clean Python environment and the right tools. We will cover how to install core libraries, configure a browser driver, and write a simple script. This script opens a page and captures content. It gives a reliable base for proxy integration later.

Installing Necessary Libraries

We recommend creating a virtual environment with virtualenv or venv. This keeps dependencies isolated. Activate the environment and pin versions in a requirements.txt file. This ensures reproducible builds.

- Use pip to install packages: pip install selenium requests beautifulsoup4

- If evasion is needed, add undetected-chromedriver: pip install undetected-chromedriver

- Record exact versions with pip freeze > requirements.txt for CI/CD consistency

Configuring WebDriver

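Match chromedriver or geckodriver to the installed browser version on the host. A mismatched driver usually fails at startup with a session-creation error. Selenium 4.6 and later include Selenium Manager, which can download a matching driver automatically when none is found on PATH.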

- Place chromedriver on PATH or point to its executable in code.

- Use browser Options for headless mode, a custom user-agent, and to disable automation flags when needed.

- In CI/CD, install the browser and driver in the build image or use a managed webdriver service.

| Component | Recommendation | Notes |
|---|---|---|
| Python Environment | venv or virtualenv | Isolate dependencies and avoid system conflicts |
| Libraries | selenium, requests, beautifulsoup4 | Essential for automated scraping and parsing |
| Driver | chromedriver or geckodriver | Keep driver version synced with Chrome or Firefox |
| CI/CD Integration | Include driver install in pipeline | Use pinned versions and cache downloads |

Writing the First Selenium Script

Start with a minimal script to validate the Python Selenium setup and the driver. Keep the script readable. Add explicit waits to avoid brittle code.

- Initialize Options and WebDriver, noting where proxy values will be inserted later.

- Navigate to a URL, wait for elements with WebDriverWait, then grab page_source or specific elements.

- Test locally before scaling to many sessions or integrating rotation logic.

Example structure in words: import required modules, set browser options, instantiate webdriver with chromedriver path, call get(url), wait for an element, extract HTML, then quit the browser.
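
The structure described above might look like the following minimal sketch. It assumes Selenium 4 with a local Chrome install; `chrome_arguments` and `fetch_heading` are illustrative names rather than Selenium APIs, and the `h1` selector is only a placeholder for whatever element your page needs.

```python
from typing import List, Optional


def chrome_arguments(headless: bool = True, proxy: Optional[str] = None) -> List[str]:
    """Build Chrome command-line switches; proxy is e.g. 'http://host:port'."""
    args = ["--window-size=1280,800"]
    if headless:
        # Assumes a recent Chrome; older releases use plain "--headless".
        args.append("--headless=new")
    if proxy:
        # This is where proxy values are inserted in later sections.
        args.append(f"--proxy-server={proxy}")
    return args


def fetch_heading(url: str, timeout: float = 10.0) -> str:
    """Open url, wait for the first <h1>, and return its text."""
    # Imported lazily so chrome_arguments() works without Selenium installed.
    from selenium import webdriver
    from selenium.webdriver.common.by import By
    from selenium.webdriver.support import expected_conditions as EC
    from selenium.webdriver.support.ui import WebDriverWait

    options = webdriver.ChromeOptions()
    for arg in chrome_arguments():
        options.add_argument(arg)

    driver = webdriver.Chrome(options=options)
    try:
        driver.get(url)
        # Explicit wait: block until the element exists or the timeout expires.
        heading = WebDriverWait(driver, timeout).until(
            EC.presence_of_element_located((By.TAG_NAME, "h1"))
        )
        return heading.text
    finally:
        driver.quit()  # always release the browser, even on errors
```

Calling `fetch_heading("https://example.com")` on a machine with Chrome installed should return the page's first heading; `driver.page_source` can be read at the same point if the full HTML is needed.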

We should run this script after installing selenium and verifying chromedriver. Once the basic flow works, we can expand for automated scraping. Add proxy parameters in the WebDriver options for scaled runs.

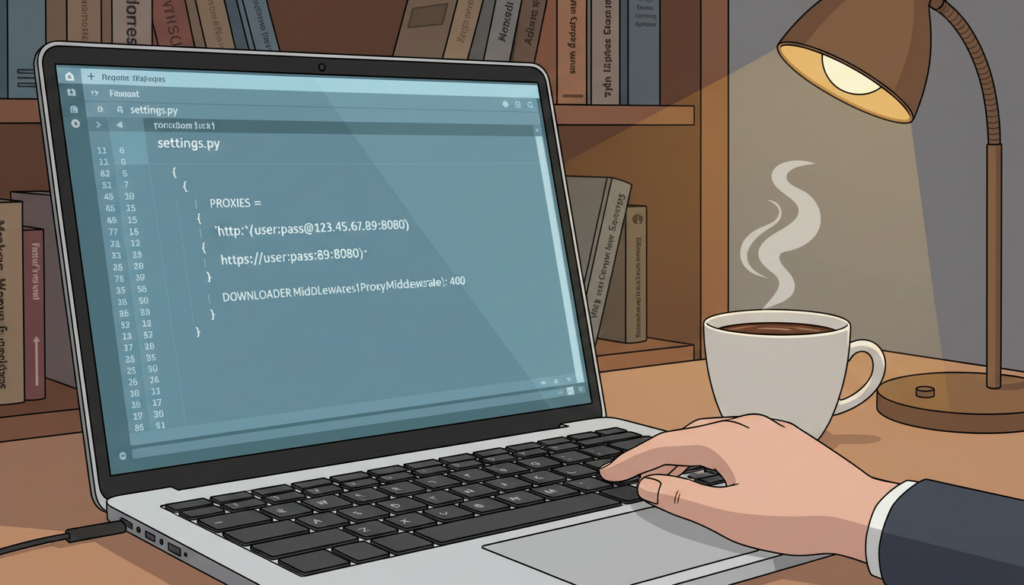

Integrating Proxies into Selenium

We show how to add proxies to your Selenium projects. This section covers setting up proxies, applying them in WebDriver, and checking that they work before large runs. The guidance is meant to help you avoid common mistakes and to support sticky sessions and IP rotation.

Basic proxy configuration in browser options

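We set HTTP/HTTPS and SOCKS proxies through browser options. For Chrome, we use ChromeOptions and add an argument such as --proxy-server=http://host:port (two ASCII hyphens). For Firefox, we set preferences such as network.proxy.type, network.proxy.http, network.proxy.http_port, or network.proxy.socks. Specify the proxy as host:port. Chrome ignores credentials embedded as username:password@host:port in --proxy-server, so authenticated proxies need one of the approaches described under Handling Proxy Authentication.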

When using SOCKS5, we specify the scheme in the option string, for example socks5://host:port. If the proxy requires credentials, use an authenticating local proxy or a browser extension rather than putting the credentials in the option string.
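
As a rough illustration of the options described above, the helpers below build the Chrome switch and the Firefox preferences for a manual proxy. The function names are hypothetical; the switch and preference names are the ones Chrome and Firefox use, but verify them against your browser versions.

```python
from typing import Dict, Union


def chrome_proxy_argument(scheme: str, host: str, port: int) -> str:
    """Return the --proxy-server switch for Chrome (two ASCII hyphens)."""
    if scheme not in {"http", "https", "socks4", "socks5"}:
        raise ValueError(f"unsupported proxy scheme: {scheme}")
    return f"--proxy-server={scheme}://{host}:{port}"


def firefox_proxy_prefs(scheme: str, host: str, port: int) -> Dict[str, Union[str, int, bool]]:
    """Return Firefox preferences for a manual proxy configuration."""
    prefs: Dict[str, Union[str, int, bool]] = {"network.proxy.type": 1}  # 1 = manual
    if scheme.startswith("socks"):
        prefs["network.proxy.socks"] = host
        prefs["network.proxy.socks_port"] = port
        prefs["network.proxy.socks_version"] = 5 if scheme == "socks5" else 4
        prefs["network.proxy.socks_remote_dns"] = True  # resolve DNS via the proxy
    else:
        # Route both plain HTTP and HTTPS traffic through the same proxy.
        prefs["network.proxy.http"] = host
        prefs["network.proxy.http_port"] = port
        prefs["network.proxy.ssl"] = host
        prefs["network.proxy.ssl_port"] = port
    return prefs
```

With Selenium 4, the Chrome value would be passed to `options.add_argument(...)`, and each Firefox entry to `options.set_preference(name, value)` on a Firefox `Options` object.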

Applying proxy settings in WebDriver setup

We add proxy info when creating a driver. For modern Chrome, ChromeOptions.add_argument works well for simple proxy entries. For consistent handling across browsers, Selenium 4 accepts a Proxy object or a proxy capability set on the options; older Selenium 3 code used DesiredCapabilities for the same purpose.

We handle PAC files or system proxies by pointing the browser to the PAC URL or by reading system proxy settings into the capabilities. Some environments force system proxies; we read those values and convert them into browser options to maintain expected behavior.
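
One way to express manual, PAC, and system proxy settings in a browser-neutral form is the W3C WebDriver `proxy` capability. The sketch below assumes Selenium 4; `proxy_capability` and `chrome_with_proxy` are illustrative helper names, and the PAC URL in the usage note is a placeholder.

```python
from typing import Any, Dict, Optional


def proxy_capability(host_port: Optional[str] = None,
                     pac_url: Optional[str] = None,
                     use_system: bool = False) -> Dict[str, Any]:
    """Build a W3C WebDriver 'proxy' capability dictionary."""
    if pac_url:
        return {"proxyType": "pac", "proxyAutoconfigUrl": pac_url}
    if use_system:
        return {"proxyType": "system"}
    if host_port:
        # The same proxy serves plain HTTP and HTTPS traffic.
        return {"proxyType": "manual", "httpProxy": host_port, "sslProxy": host_port}
    return {"proxyType": "direct"}


def chrome_with_proxy(capability: Dict[str, Any]):
    """Start Chrome with the given proxy capability."""
    from selenium import webdriver  # lazy import: only needed when launching

    options = webdriver.ChromeOptions()
    options.set_capability("proxy", capability)
    return webdriver.Chrome(options=options)
```

For example, `chrome_with_proxy(proxy_capability(pac_url="http://config.example/proxy.pac"))` would point the browser at a PAC file, while `proxy_capability(use_system=True)` defers to the host's proxy settings.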

Validating proxy connection

We check if a proxy is active before scaling tests. A common method is to navigate to an IP-check endpoint and compare the returned IP and geo data to expected values. This confirms the proxy is in use and matches the target region.

Automated validation steps include checking the headers the target server receives, testing geolocation, and verifying DNS resolution. We classify a proxy as transparent if the server still sees the client address, anonymous if proxy headers are present but the client address is hidden, and elite when the origin IP is fully distinct and no proxy headers are present.
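
The classification above can be sketched as a small pure function. It assumes you have already fetched, through the proxy, the IP and request headers that a header-echo endpoint saw; the header list and the function name are illustrative, not exhaustive or standard.

```python
from typing import Mapping

# Headers that commonly reveal a proxy hop (illustrative list).
PROXY_HEADERS = ("via", "x-forwarded-for", "forwarded", "x-real-ip", "proxy-connection")


def classify_proxy(client_ip: str, observed_ip: str,
                   echoed_headers: Mapping[str, str]) -> str:
    """Classify a proxy from what the target server saw."""
    headers = {name.lower(): value for name, value in echoed_headers.items()}
    # Simple substring match; a stricter check would parse each header value.
    leaked = observed_ip == client_ip or any(
        client_ip in headers.get(name, "") for name in PROXY_HEADERS
    )
    if leaked:
        return "transparent"  # the server can still see the real client address
    if any(name in headers for name in PROXY_HEADERS):
        return "anonymous"    # proxy is visible, client address is not
    return "elite"            # no proxy headers and a distinct origin IP
```

For instance, `classify_proxy("1.2.3.4", "9.9.9.9", {"Via": "1.1 squid"})` returns `"anonymous"`, because a proxy header is present but the client address does not appear anywhere.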

| Check | How to Run | What It Confirms |
|---|---|---|
| IP check | Navigate to an IP API from the Selenium script | Shows public IP and helps confirm proxy routing |
| Geo test | Request location-based content or a geolocation API | Verifies proxy region and supports IP rotation planning |
| Header inspection | Load a header-echo endpoint through the proxy and inspect the headers the server received | Detects transparent vs. anonymous vs. elite proxies |
| Session stickiness | Run repeated requests with the same cookie/session token | Confirms sticky-session behavior with the chosen proxy |
| Load validation | Automate batches of requests before extraction | Confirms stability for large jobs and validates the proxy setup in WebDriver at scale |

We suggest automating these checks and adding them to CI pipelines. Validating proxies early reduces failures, makes sticky-session designs reliable, and keeps IP rotation predictable for long runs.

Managing Proxy Rotation

We manage proxy rotation to keep automated scraping stable and efficient. Rotating proxies reduces the chance of triggering a request limit. It also lowers IP-based blocking and creates traffic patterns that mimic distributed users. We balance rotation frequency with session needs to avoid breaking login flows or multi-step transactions.

Why rotate?

We rotate IPs to prevent single-IP throttling and to spread requests across a pool of addresses. For stateless tasks, frequent IP rotation minimizes the footprint per proxy. For sessions that require continuity, we keep a stable IP for the session lifetime to preserve cookies and auth tokens.

How we choose a strategy

We pick per-request rotation when each page fetch is independent. We use per-session (sticky) rotation for login flows and multi-step forms. Round-robin pools work when proxy health is uniform. Randomized selection helps evade pattern detection. Weighted rotation favors proxies with lower latency and better success rates.

Implementation tactics

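- Per-request rotation: swap proxies for each HTTP call to distribute load and avoid hitting a request limit on any single IP. Because a browser's proxy is fixed when it launches, in Selenium this usually means pointing the browser at a rotating gateway endpoint or a local forwarding proxy that changes the upstream IP.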

- Per-session rotation: assign one proxy per browser session when session continuity matters, keeping cookies and local storage intact.

- Round-robin and random pools: rotate through lists to balance usage and reduce predictability when rotating proxies.

- Weighted selection: score proxies by health, latency, and recent failures; prefer higher-scoring proxies for critical tasks.
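
The selection strategies in this list can be sketched with the standard library alone. The function names and the idea of a numeric health score per proxy are assumptions made for illustration; proxies are represented as plain strings.

```python
import itertools
import random
from typing import Dict, Iterator, List, Optional


def round_robin(proxies: List[str]) -> Iterator[str]:
    """Cycle through proxies in a fixed order."""
    return itertools.cycle(proxies)


def pick_random(proxies: List[str], rng: Optional[random.Random] = None) -> str:
    """Pick a proxy uniformly at random to reduce predictability."""
    return (rng or random).choice(proxies)


def pick_weighted(scores: Dict[str, float], rng: Optional[random.Random] = None) -> str:
    """Pick a proxy with probability proportional to its health score."""
    names = list(scores)
    # Negative scores are clamped to zero; at least one score must be positive.
    weights = [max(scores[name], 0.0) for name in names]
    return (rng or random).choices(names, weights=weights, k=1)[0]
```

For per-session rotation, one value drawn from any of these helpers would be used when the browser is launched and kept until it quits. Passing a seeded `random.Random` makes runs reproducible in tests.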

Operational safeguards

We run health checks to mark proxies as alive or dead before use. We implement failover so Selenium switches to a healthy proxy if one fails mid-run. We set usage caps per proxy to respect provider request limits and avoid bans.
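
A minimal sketch of these safeguards is a pool that tracks usage and consecutive failures per proxy. The class names, default caps, and least-used selection rule are assumptions for illustration, not a provider API.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class ProxyState:
    uses: int = 0
    failures: int = 0  # consecutive failures since the last success


@dataclass
class ProxyPool:
    max_uses: int = 100    # per-proxy usage cap (illustrative value)
    max_failures: int = 3  # consecutive failures before a proxy counts as dead
    states: Dict[str, ProxyState] = field(default_factory=dict)

    def add(self, proxy: str) -> None:
        self.states.setdefault(proxy, ProxyState())

    def acquire(self) -> Optional[str]:
        """Return the least-used healthy proxy, or None if none is available."""
        healthy = [
            (state.uses, proxy)
            for proxy, state in self.states.items()
            if state.failures < self.max_failures and state.uses < self.max_uses
        ]
        if not healthy:
            return None
        _, proxy = min(healthy)
        self.states[proxy].uses += 1
        return proxy

    def report(self, proxy: str, ok: bool) -> None:
        """Record a request outcome; a success resets the failure count."""
        state = self.states[proxy]
        state.failures = 0 if ok else state.failures + 1
```

Failover then amounts to calling `report(proxy, ok=False)` when a session fails and `acquire()` again before relaunching the browser; a `None` result signals that the pool needs new proxies.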

Tools and providers

Bright Data, Oxylabs, and Smartproxy offer managed rotation and geo-targeting that integrate well with Selenium. Open-source rotators and proxy pool managers let us host custom pools and control IP rotation rules. Middleware patterns that sit between Selenium and proxies make it easier to handle health checks, failover, and autoscaling under load.

Scaling and reliability

We monitor proxy latency and error rates to adjust pool size. We autoscale worker instances and proxy allocations when automated scraping volume spikes. We enforce per-proxy request limits so no single IP exceeds safe thresholds.

Practical trade-offs

Frequent rotation reduces detectability but can break flows that expect a single IP for many steps. Sticky sessions protect complex interactions at the cost of higher per-proxy load. We choose a hybrid approach: use per-request rotation for bulk scraping and sticky rotation for authenticated tasks.
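
The hybrid approach can be sketched as a policy function: requests that carry a session identifier always map to the same proxy, and everything else rotates. The helper names and the hashing scheme are assumptions chosen for illustration.

```python
import hashlib
from typing import List, Optional


def sticky_proxy(session_id: str, proxies: List[str]) -> str:
    """Map a session ID to the same proxy every time, across processes."""
    digest = hashlib.sha256(session_id.encode("utf-8")).digest()
    return proxies[int.from_bytes(digest[:8], "big") % len(proxies)]


def choose_proxy(proxies: List[str], request_index: int,
                 session_id: Optional[str] = None) -> str:
    """Hybrid policy: sticky when a session ID is given, round-robin otherwise."""
    if session_id is not None:
        return sticky_proxy(session_id, proxies)
    return proxies[request_index % len(proxies)]
```

Note that the sticky mapping depends on the pool's size and order, so adding or removing proxies can move a session to a different address. Many providers offer their own sticky-session endpoints, which avoid this limitation.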

Handling Proxy Authentication

Adding proxies to browser automation requires careful planning for authentication. This ensures tests run smoothly without interruptions. We’ll discuss common methods, how to set them up in Selenium, and keep credentials secure.

We’ll look at four main ways to authenticate and which providers use each method.

Basic credentials use a username and password in the proxy URL. Many providers, including some residential ones, support this. It’s easy to set up and works with many tools.

IP whitelisting allows traffic only from specific IP addresses. Large providers such as Bright Data (formerly Luminati) support this. It’s secure and works well when tests run from fixed, known IP addresses.

Token-based authentication uses API keys or tokens in headers or query strings. Modern proxy APIs from Oxylabs and Smartproxy often use this. It gives detailed control and makes it easy to revoke access.

SOCKS5 authentication uses username and password in the SOCKS protocol. It’s good for providers that focus on low-level tunneling and for non-HTTP traffic.

Each method has its own pros and cons. We choose based on the provider, our test environment, and whether we need sticky sessions.

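To set up proxies with credentials in Selenium, we use a few methods. Some clients accept credentials embedded in the proxy URL, for example http://user:pass@proxy.example:port for basic auth or http://token@proxy.example:port for some token schemes. Chrome ignores credentials passed this way in --proxy-server, so with Selenium the URL form is mainly useful for a local forwarding proxy or for HTTP clients used alongside the browser.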

Browser profiles and extensions are another option. For Chrome, an extension can answer the proxy's authentication challenge or add Proxy-Authorization headers. This is useful when the browser does not accept embedded credentials.
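
A commonly used pattern is to generate a small Chrome extension that sets the proxy and answers the authentication challenge. The sketch below builds such an extension as a zip file. It assumes a Manifest V3 extension with the `webRequestAuthProvider` permission; the helper names are hypothetical, and extension loading differs between Chrome versions and headless modes, so treat this as a starting point to verify rather than a guaranteed recipe.

```python
import json
import zipfile
from typing import Dict

MANIFEST = {
    "manifest_version": 3,
    "name": "Proxy auth helper (example)",
    "version": "1.0",
    "permissions": ["proxy", "webRequest", "webRequestAuthProvider"],
    "host_permissions": ["<all_urls>"],
    "background": {"service_worker": "background.js"},
}

# JavaScript for the extension's service worker; %(config)s is filled in below.
BACKGROUND_JS = """
const config = %(config)s;
chrome.proxy.settings.set({
  value: {
    mode: "fixed_servers",
    rules: {singleProxy: {scheme: config.scheme, host: config.host, port: config.port}}
  },
  scope: "regular"
});
chrome.webRequest.onAuthRequired.addListener(
  (details, callback) => callback({
    authCredentials: {username: config.username, password: config.password}
  }),
  {urls: ["<all_urls>"]},
  ["asyncBlocking"]
);
"""


def extension_files(scheme: str, host: str, port: int,
                    username: str, password: str) -> Dict[str, str]:
    """Return the extension's file names mapped to their contents."""
    config = json.dumps({"scheme": scheme, "host": host, "port": port,
                         "username": username, "password": password})
    return {
        "manifest.json": json.dumps(MANIFEST, indent=2),
        "background.js": BACKGROUND_JS % {"config": config},
    }


def write_extension(path: str, files: Dict[str, str]) -> str:
    """Write the files into a zip archive that can be loaded as an extension."""
    with zipfile.ZipFile(path, "w") as archive:
        for name, content in files.items():
            archive.writestr(name, content)
    return path
```

The resulting archive would be passed to `ChromeOptions.add_extension(path)`. Because the password is written to disk in plain text, create the file in a temporary directory and delete it after the run.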

Proxy auto-configuration (PAC) files let us route requests dynamically. They keep routing logic out of our test code. PAC scripts are useful when we need different proxies for different targets. They choose a proxy per URL but cannot carry credentials, so they pair best with IP whitelisting.

For SOCKS auth, we configure the WebDriver to use a SOCKS proxy and provide credentials through the OS’s proxy agent or a local proxy wrapper. This keeps Selenium simple while honoring SOCKS5 negotiation.

We should store credentials securely instead of hard-coding them. Use environment variables or a secrets manager like AWS Secrets Manager or HashiCorp Vault. Rotate username and password proxy values and tokens regularly to reduce risk if a secret is leaked.
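
As a small sketch of keeping credentials out of source code, the helpers below read proxy settings from environment variables and redact them for logging. The variable names (`PROXY_HOST`, `PROXY_PORT`, `PROXY_USER`, `PROXY_PASS`) and function names are assumptions; a secrets manager would typically populate the environment at deploy time.

```python
import os
from urllib.parse import quote


def proxy_url_from_env(prefix: str = "PROXY") -> str:
    """Build a proxy URL from <prefix>_HOST, _PORT, _USER, and _PASS."""
    host = os.environ[f"{prefix}_HOST"]
    port = int(os.environ[f"{prefix}_PORT"])
    user = os.environ.get(f"{prefix}_USER")
    password = os.environ.get(f"{prefix}_PASS", "")
    if not user:
        return f"http://{host}:{port}"
    # Percent-encode credentials so characters like '@' or ':' do not break the URL.
    return f"http://{quote(user, safe='')}:{quote(password, safe='')}@{host}:{port}"


def redact(proxy_url: str) -> str:
    """Hide credentials before a proxy URL is written to logs."""
    scheme, _, rest = proxy_url.partition("://")
    if "@" not in rest:
        return proxy_url
    return f"{scheme}://***@{rest.rsplit('@', 1)[1]}"
```

The resulting URL suits HTTP clients or a local forwarding proxy; for the browser itself, the host and port would be passed separately and the credentials supplied through one of the mechanisms above.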

When we need sticky sessions, we must handle request affinity. The proxy provider can do this, or we can keep the same connection and cookies across runs. Choosing a provider that offers sticky-session endpoints helps reduce flakiness in multi-step flows.

| Authentication Method | Typical Providers | How to Configure in Selenium | Strengths |
|---|---|---|---|
| Basic (username:password) | Smartproxy, Oxylabs | Supply via extension or a local forwarding proxy; embed in the proxy URL where the client supports it | Simple, widely supported, quick setup |
| IP Whitelisting | Bright Data, residential services | Set allowed IPs in provider dashboard; no per-request creds | High security, no credential passing, stable sessions |
| Token-based | Oxylabs, provider APIs | Add headers via extension; load tokens from environment secrets | Fine-grained control, revocable, scriptable |
| SOCKS5 with auth | Private SOCKS providers, SSH tunnels | Use OS proxy agent or local wrapper to supply SOCKS auth | Supports arbitrary TCP traffic and low-level tunneling |

Troubleshooting Common Proxy Issues

When proxy connections fail, we start with a set of checks. We look at network diagnostics, client logs, and run simple tests. This helps us find the problem quickly and avoid guessing.

We check for connection timeouts and failures. We look at DNS resolution, firewall rules, and if we can reach the endpoint. We also increase timeouts in Selenium and add retry logic.
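
Retry logic of this kind is often written as exponential backoff with jitter. The sketch below is one possible shape; the function name, default delays, and default exception types are illustrative choices.

```python
import random
import time
from typing import Callable, Tuple, Type, TypeVar

T = TypeVar("T")


def retry_with_backoff(
    action: Callable[[], T],
    attempts: int = 4,
    base_delay: float = 1.0,
    max_delay: float = 30.0,
    retry_on: Tuple[Type[BaseException], ...] = (TimeoutError, ConnectionError),
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call action, retrying on the listed exceptions with exponential backoff."""
    for attempt in range(attempts):
        try:
            return action()
        except retry_on:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the last error
            delay = min(max_delay, base_delay * 2 ** attempt)
            # Jitter keeps parallel workers from retrying in lockstep.
            sleep(delay + random.uniform(0, delay / 2))
    raise ValueError("attempts must be at least 1")
```

With Selenium, `retry_on` would typically include `TimeoutException` and `WebDriverException` from `selenium.common.exceptions`, and `driver.set_page_load_timeout(seconds)` raises the page-load limit itself.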

Signs of IP bans and rate limiting include HTTP 403 or 429 responses and CAPTCHA prompts. We lower request frequency and add delays. We also switch to residential IPs if needed.
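
These signals can be turned into a simple decision helper. WebDriver does not expose HTTP status codes directly, so the sketch assumes the status comes from elsewhere, such as browser performance logs or a probe request sent through the same proxy. The marker strings and action names are illustrative.

```python
from typing import Optional

# Phrases that often appear on challenge pages (illustrative, not exhaustive).
CAPTCHA_MARKERS = ("captcha", "verify you are human", "unusual traffic")


def diagnose_block(status_code: int, body: str) -> Optional[str]:
    """Return a suggested action for a blocked response, or None if it looks fine."""
    text = body.lower()
    if status_code == 429:
        return "slow_down"        # rate limited: back off before retrying
    if status_code == 403:
        return "rotate_proxy"     # likely IP ban: switch to another address
    if any(marker in text for marker in CAPTCHA_MARKERS):
        return "rotate_and_slow"  # challenge page: rotate and reduce request rate
    return None
```

In a Selenium run, `body` could be `driver.page_source`, and the returned action would feed the rotation and throttling logic.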

Debugging proxy settings means capturing browser logs and checking headers. We verify SSL/TLS handling and test the proxy with curl. This helps us see if the problem is in the network or our setup.

We use logging and monitoring tools to track proxy health. This lets us spot patterns related to rate limiting and outages. We can then remove bad endpoints and improve rotation policies.

Below is a compact reference comparing common failure modes and our recommended fixes.

| Issue | Common Indicators | Immediate Actions | Long-term Mitigation |
|---|---|---|---|
| Connection timeouts | Slow responses, socket timeouts, Selenium wait errors | Increase timeouts, run curl test, check DNS and firewall | Use health checks, remove slow proxies, implement retry with backoff |
| Provider outage | Multiple simultaneous failures from same IP pool | Switch to alternate provider, validate endpoints | Maintain multi-provider failover and automated pre-validation |
| IP bans | HTTP 403, CAPTCHAs, blocked content | Rotate IPs immediately, reduce request rate | Move to residential IPs, diversify pools, monitor ban patterns |
| Rate limiting | HTTP 429, throttled throughput | Throttle requests, add randomized delays | Implement adaptive rate controls and smarter IP rotation |
| Proxy misconfiguration | Invalid headers, auth failures, TLS errors | Inspect headers, verify credentials, capture browser logs | Automate config validation and keep credential vaults updated |

Performance Considerations with Proxies

Choosing the right proxy can make our Selenium tests run smoothly. Even small changes can speed up or slow down tests. Here are some tips to help you make the best choice.

Impact on Response Times

Proxies add at least one extra network hop, so they increase latency. We measure round-trip time through each provider and region to see how the choice of proxy affects our tests.

When tests run in parallel, small per-request delays add up across the suite. We track response-time distributions, not just averages, to see how added latency affects test duration and failure rates.
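
A lightweight way to collect and summarize such timings is sketched below. The helper names are hypothetical; `time_call` wraps any callable, for example a page load through a given proxy, and `summarize` uses a nearest-rank 95th percentile.

```python
import statistics
import time
from typing import Callable, Dict, List


def time_call(action: Callable[[], object]) -> float:
    """Return how long action takes, in seconds."""
    start = time.perf_counter()
    action()
    return time.perf_counter() - start


def summarize(latencies: List[float]) -> Dict[str, float]:
    """Summarize a non-empty list of latency samples (seconds)."""
    ordered = sorted(latencies)
    # Nearest-rank p95: the smallest sample that covers 95% of the data.
    rank = (95 * len(ordered) + 99) // 100  # integer ceil(0.95 * n)
    return {
        "median": statistics.median(ordered),
        "p95": ordered[rank - 1],
        "max": ordered[-1],
    }
```

Collecting `time_call(lambda: driver.get(url))` for each proxy and comparing the `summarize` results side by side shows which providers or regions add the most delay.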

Balancing Speed and Anonymity

We mix fast datacenter proxies with slower residential ones. Datacenter proxies are quicker but easier for anti-bot systems to flag. Residential proxies are harder to flag but slower.

We test different mixes of proxies to find the best balance. A mix can make our tests more reliable without breaking the bank. We also try to keep connections open and pick proxies close to our targets to reduce delays.

Optimization Tactics

- Choose geographically proximate proxies to cut latency and improve response times.

- Maintain warm connections so handshakes do not add delay to each request.

- Reuse sessions where acceptable to reduce setup overhead and improve throughput.

- Monitor provider SLA and throughput metrics to guide data-driven proxy selection.

Measuring and Adjusting

We regularly test how different proxies perform. We look at how long it takes for responses, how often requests succeed, and how much data we can send. These results help us adjust our proxy settings.

By keeping an eye on these metrics, we can make our tests faster without losing privacy. Regular checks help us make better choices about cost, reliability, and the right mix of proxies for our Selenium tests.

Best Practices for Using Proxies with Selenium

Using proxies with Selenium helps us automate tasks reliably and safely. We pick the right provider and avoid mistakes. Regular checks keep our proxy pool healthy. These steps are key for Selenium teams.

Selecting the Right Provider

We look at providers based on reliability, pool size, and geographic coverage. We also check rotation features, pricing, and documentation. Bright Data and Oxylabs are top choices for big projects.

It’s important to test providers to see how they perform in real scenarios. Look for sticky-session support and IP rotation options that fit your needs. Good documentation and support make integration easier.

Avoiding Common Pitfalls

We steer clear of low-quality proxies that fail often. Hardcoding credentials is a security risk. We start traffic slowly to avoid getting blocked too quickly.

CAPTCHAs and JavaScript challenges need to be handled. We log proxy errors to debug quickly. This helps us fix issues fast.

Regular Maintenance of Proxy List

We regularly check the health of our proxies and remove slow ones. We also rotate credentials and track performance metrics. This keeps our proxy list in top shape.

We automate the process of removing bad proxies and adding new ones. Deliberate use of IP rotation and sticky sessions helps us stay anonymous while maintaining access.

| Area | Action | Why It Matters |
|---|---|---|
| Provider Evaluation | Test reliability, pool size, geographic reach, pricing, docs | Ensures stable access and predictable costs during scale-up |
| Session Handling | Use sticky sessions for stateful flows; enable IP rotation for stateless ones | Preserves login sessions when needed and avoids detection for other tasks |
| Security | Never hardcode credentials; use secrets manager and rotation | Reduces exposure risk and eases incident response |
| Traffic Strategy | Ramp traffic gradually and monitor blocks | Prevents sudden bans from aggressive parallel runs |
| Maintenance | Automate health checks, prune slow IPs, log metrics | Maintains pool quality and supports troubleshooting |

Real-World Applications of Selenium with Proxies

We use Selenium with proxies for real-world tasks. This combo automates browser actions and manages proxies smartly. It makes web scraping, competitive analysis, and data mining more reliable across different areas.

For big web scraping jobs, we use automated flows with rotating proxies. This avoids IP blocks and lets us scrape more efficiently. We choose headful browsers for pages with lots of JavaScript to mimic real user experiences.

Rotating proxies help us spread out requests evenly. This keeps our scraping smooth and avoids hitting rate limits.

In competitive analysis, we track prices and products with geo-located proxies. We simulate local sessions to get results like a real shopper. IP rotation helps us avoid biased data and rate caps, giving us accurate insights.

We mine data from complex sites and dashboards using automated scraping and proxies. This method collects data in parallel, reducing the risk of blocks. It also makes our datasets more complete.

In user experience testing, we test from different regions to check localized content. Proxies help us confirm how content looks and works in different places. They also let us test single-user journeys consistently.

We choose between residential and datacenter proxies based on the task. For ongoing monitoring or heavy scraping, rotating proxies are key. For quick checks, a few stable addresses work well without losing anonymity.

Here’s a quick look at common use cases, proxy patterns, and their benefits.

| Use Case | Proxy Pattern | Primary Benefit |
|---|---|---|
| Large-scale web scraping | Rotating proxies with short dwell time | High throughput, reduced throttling, broad IP diversity |
| Competitive analysis | Geo-located proxies with controlled IP rotation | Accurate regional results, avoids geofencing bias |
| Data mining of dashboards | Sticky sessions on residential proxies | Session persistence for authenticated flows, fewer reauths |
| User experience testing | Region-specific proxies with session affinity | Realistic UX validation, consistent A/B test impressions |
| Ad hoc validation | Single stable datacenter proxy | Fast setup, predictable latency for quick checks |

Understanding Legal Implications of Proxy Usage

Using proxies with automated tools can bring benefits but also risks. It’s important to know the legal side to avoid trouble. We’ll look at key areas to follow in our work.

Compliance with Terms of Service

We check a website’s terms before using automated tools. Even with rotating IPs, we must follow these rules. Breaking them can lead to blocked IPs, suspended accounts, or lawsuits.

When a site’s TOS doesn’t allow automated access, we ask for permission. Or we limit our requests to allowed areas. This helps avoid legal issues related to TOS.

Respecting Copyright Laws

We don’t copy large amounts of content without permission. This can lead to DMCA takedowns or lawsuits. We only keep what we need for analysis.

For reuse, we get licenses or use public-domain and Creative Commons content. This way, we follow copyright laws and lower our legal risk.

Privacy Regulations and Ethical Considerations

We handle personal data carefully and follow privacy laws like the California Consumer Privacy Act. We minimize and anonymize data as much as possible.

We work with lawyers to understand our privacy duties. Ethical scraping helps protect individuals and our company from privacy issues.

Checklist we follow:

- Review and document site-specific terms of service and how we comply with them.

- Limit storage of copyrighted material; obtain permissions when needed.

- Apply data minimization, hashing, and anonymization to personal data.

- Maintain audit logs and consent records for legal review.

Future Trends in Selenium and Proxy Usage

We watch how browser automation changes and how that affects proxy use. Alongside Selenium, newer tools such as Playwright and Puppeteer have gained ground and make headless workflows more reliable. Cloud-native CI/CD pipelines will increasingly combine local testing with large-scale deployment.

Advancements in Automation Tools

Headless browsers with anti-detection features are becoming more popular. Native browser APIs will get stronger, making tests behave more like real user interactions. Tighter integration with GitHub Actions and CircleCI will make delivery faster and tests more reliable.

Playwright and Puppeteer offer modern APIs and browser-context isolation as alternatives to Selenium. We predict more cross-tool workflows, offering flexibility in audits, scraping, and regression testing.

The Growing Need for Anonymity

As anti-bot systems get better, the need for anonymity grows. Rotating proxies and IP rotation will be key for scaling without getting blocked. Residential and mobile proxies will be in demand for their legitimacy and reach.

We suggest planning proxy strategies for session persistence and regional targeting. This reduces noise in tests.

Innovations in Proxy Technology

Providers are using AI to score proxy health and flag bad ones. Smart session-sticky algorithms keep continuity while allowing ip rotation. Tokenized authentication reduces credential leaks and makes rotation easier.

We expect more services that include CAPTCHA solving, bandwidth guarantees, and analytics. Keeping up with proxy technology will help teams find solutions that meet their needs.

Conclusion: Maximizing Selenium’s Potential

We’ve talked about how proxies make browser automation reliable. Rotating proxies are key for keeping things running smoothly. They help avoid hitting request limits and reduce the chance of getting banned.

They also let us test from different locations and keep sticky sessions when a workflow requires them. These advantages are crucial for large-scale automated scraping and for running Selenium reliably in production.

When picking a proxy provider, look for clear SLAs, broad IP diversity, and safe handling of credentials. Scale up gradually, monitor performance, and base decisions on measured data. Stay within legal and ethical limits throughout.

Next, try out a Selenium workflow with proxies and do small tests to see how different strategies work. Use metrics, keep credentials safe, and add proxy tests to your CI pipelines. This will help your team grow automated scraping and Selenium projects safely and effectively.

FAQ

What is the focus of this guide on using proxies with Selenium?

This guide is about using proxies, especially rotating ones, to improve Selenium tests. Proxies help avoid IP bans and distribute traffic so that it looks like it comes from many users. It’s for developers and teams using Selenium, and it covers setup, integration, and more.

Why do rotating proxies matter for large-scale automated scraping and data mining?

Rotating proxies help avoid request limits and IP bans. They spread traffic across a pool of IPs, so requests appear to come from many users. This improves success rates and allows for geo-targeted scraping.

Who should read this listicle and what practical takeaways will they get?

It’s for engineers and teams in the U.S. using Selenium. You’ll learn about setting up proxies, choosing the right ones, and rotating them. It also covers authentication and performance trade-offs.

What exactly is Selenium and what components should we know?

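Selenium is an open-source suite for automating web browsers. Its main components are WebDriver, which controls browsers such as Chrome, Firefox, and Edge; Selenium Grid, which runs tests in parallel across machines; and Selenium IDE, for recording and playback. It works with tools like Jenkins and has a big community. Knowing how it uses the WebDriver protocol is key.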

How do proxies enhance privacy and anonymity in automated tests?

Proxies hide our IP, protecting our internal networks. They help avoid linking tests to one network, which is crucial for realistic testing.

When should we use session sticky (sticky IP sessions) versus per-request rotation?

Use session sticky for stateful interactions like logins. Use per-request rotation for stateless scraping. A mix of both is often best.
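The mix of both modes described above can be sketched with a small in-memory pool. This is an illustrative sketch under stated assumptions: the `ProxyPool` class, its method names, and the `host:port` strings are hypothetical, and a commercial provider may instead expose sticky sessions through its own gateway settings.

```python
import itertools


class ProxyPool:
    """Round-robin pool that can also pin one proxy to a session id."""

    def __init__(self, proxies):
        if not proxies:
            raise ValueError("proxy list must not be empty")
        self._cycle = itertools.cycle(proxies)
        self._sticky = {}  # session id -> pinned proxy

    def next_proxy(self):
        """Rotation mode: return the next proxy in round-robin order."""
        return next(self._cycle)

    def sticky_proxy(self, session_id):
        """Sticky mode: always return the same proxy for this session id."""
        if session_id not in self._sticky:
            self._sticky[session_id] = next(self._cycle)
        return self._sticky[session_id]

    def release(self, session_id):
        """Forget a finished session so its id gets a fresh proxy next time."""
        self._sticky.pop(session_id, None)
```

With Selenium the proxy is normally fixed when the browser launches, so "per-request" rotation here usually means calling `next_proxy()` for each new driver, while a login flow would call `sticky_proxy("checkout-flow")` and reuse one driver until the flow ends.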

What proxy types are appropriate for Selenium: HTTP, SOCKS, residential, or datacenter?

HTTP proxies are common and easy to set up. SOCKS5 is good for non-HTTP traffic. Residential proxies are better at avoiding blocks but are expensive. Datacenter proxies are faster but might get blocked more.

How do we configure proxies in Selenium (Python example context)?

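Set up proxies through browser options, using the host:port format for unauthenticated proxies. Chrome’s --proxy-server flag does not accept credentials in the username:password@host:port form, so for authenticated proxies we typically use a browser extension, a helper library, or IP allowlisting with the provider.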
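A minimal sketch of the browser-options approach for Chrome follows. It assumes Selenium 4 and an unauthenticated proxy. The helper names `chrome_proxy_argument` and `build_chrome_driver` and the address `203.0.113.10:8080` (a documentation-only IP) are placeholders for illustration.

```python
def chrome_proxy_argument(host: str, port: int, scheme: str = "http") -> str:
    """Build the value passed to Chrome's --proxy-server switch."""
    return f"--proxy-server={scheme}://{host}:{port}"


def build_chrome_driver(host: str, port: int, scheme: str = "http"):
    """Start Chrome with all traffic routed through the given proxy."""
    # Imported here so the helper above can be used and tested without Selenium installed.
    from selenium import webdriver

    options = webdriver.ChromeOptions()
    options.add_argument(chrome_proxy_argument(host, port, scheme))
    return webdriver.Chrome(options=options)
```

A typical call would be `driver = build_chrome_driver("203.0.113.10", 8080)`, followed by `driver.get(...)` and `driver.quit()`. Passing `scheme="socks5"` targets a SOCKS5 proxy instead.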

What are recommended tools and providers for automatic proxy rotation?

Bright Data, Oxylabs, and Smartproxy are good options. Use proxy pool managers and middleware for health checks and failover. Choose based on coverage, SLAs, and session control.

How should we handle proxy authentication securely?

Store credentials securely in environment variables or vaults. Support different auth methods and rotate credentials often. Integrate with CI/CD pipelines to reduce risk.
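As a rough sketch of the environment-variable approach, the helper below builds a proxy URL at runtime and masks it for logs. The variable names (`PROXY_HOST`, `PROXY_PORT`, `PROXY_USER`, `PROXY_PASS`) and both function names are assumptions for this example. A vault client could supply the same mapping.

```python
import os
from urllib.parse import quote, urlsplit


def proxy_url_from_env(env=os.environ) -> str:
    """Build an authenticated proxy URL from environment-style settings."""
    try:
        host = env["PROXY_HOST"]
        port = env["PROXY_PORT"]
        user = env["PROXY_USER"]
        password = env["PROXY_PASS"]
    except KeyError as missing:
        raise RuntimeError(f"missing proxy setting: {missing.args[0]}") from None
    # Percent-encode credentials so characters like '@' or ':' do not break the URL.
    return f"http://{quote(user, safe='')}:{quote(password, safe='')}@{host}:{port}"


def redact(proxy_url: str) -> str:
    """Return the proxy URL with credentials masked, safe to write to logs."""
    parts = urlsplit(proxy_url)
    return f"{parts.scheme}://***:***@{parts.hostname}:{parts.port}"
```

A URL in this form suits HTTP clients and proxy health checks. CI jobs would inject the variables from the pipeline’s secret store and log only the `redact(...)` output.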

What are common proxy-related failures and how do we troubleshoot them?

Issues include timeouts, DNS failures, and bans. Troubleshoot by increasing timeouts, retrying, and validating proxies. Switch to residential IPs if banned.
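The retry and failover steps above could look roughly like this. The function `fetch_with_failover` and its parameters are hypothetical. `fetch` stands for any callable that loads a URL through a given proxy and raises on failure, for example a wrapper that starts a driver and calls `driver.get`.

```python
import time


def fetch_with_failover(fetch, url, proxies, attempts_per_proxy=2,
                        base_delay=0.5, sleep=time.sleep):
    """Try each proxy in order, retrying with exponential backoff before moving on."""
    errors = []
    for proxy in proxies:
        for attempt in range(attempts_per_proxy):
            try:
                return fetch(url, proxy)
            except Exception as exc:  # real code should catch timeout/connection errors only
                errors.append((proxy, repr(exc)))
                sleep(base_delay * (2 ** attempt))  # 0.5s, 1.0s, ...
    raise RuntimeError(f"all proxies failed for {url}: {errors}")
```

Accepting `sleep` as a parameter keeps the helper easy to test without real delays. The collected `errors` list shows which proxies failed and why, which helps when validating a pool.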

How do proxies affect performance and response times in Selenium tests?

Proxies can increase latency. Datacenter proxies are fast but less anonymous. Residential proxies are slower but better at avoiding blocks. Measure performance and adjust accordingly.
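One simple way to measure the difference is to time the same page load several times per proxy and compare summary statistics. The sketch below is an assumption-laden example: `measure_latency` is a hypothetical helper, and `action` is any zero-argument callable, such as `lambda: driver.get(url)`.

```python
import statistics
import time


def measure_latency(action, runs=5, clock=time.perf_counter):
    """Run action several times and summarize the elapsed time in seconds."""
    samples = []
    for _ in range(runs):
        start = clock()
        action()
        samples.append(clock() - start)
    return {
        "min": min(samples),
        "median": statistics.median(samples),
        "max": max(samples),
    }
```

Running this once with a datacenter proxy and once with a residential proxy against the same page gives comparable numbers for deciding where the extra latency is acceptable.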

What best practices should we follow when selecting proxy providers?

Look at reliability, pool size, and geographic coverage. Test providers and monitor metrics. Avoid free proxies and use observability and health checks.

What real-world tasks benefit from Selenium combined with proxies?

Use it for web scraping, price monitoring, and UX testing. Proxies help avoid limits and support geo-targeted testing.

What legal and ethical considerations should guide our proxy usage?

Follow terms of service, copyright laws, and privacy regulations. Rotate proxies and anonymize data. Consult legal counsel when unsure.

What future trends should we watch in automation and proxy technology?

Look for advancements in headless browsers and cloud CI/CD. Residential and mobile proxies will become more important. Stay updated and test new tools.

What are practical next steps to get started with proxy-enabled Selenium workflows?

Start with a small pilot, test different proxy strategies, and track metrics. Use secrets managers and automate checks. Improve based on results.