This guide is for data mining teams in the United States that use Scrapy and other Python tools. We show how elite proxies, including SOCKS5 proxies, help teams work around API rate limits while keeping data collection fast and reliable.

Rate limits can slow down scraping, mess up data, and hurt competitive intelligence. We give Scrapy users steps to follow. This includes using proxies in middleware, setting up spiders and settings.py, and scaling with pipelines.

This article has 13 sections. We cover setup, best practices, legal considerations, monitoring, and troubleshooting, and we close with future trends. This helps teams move from the basics to advanced setups with confidence.

Key Takeaways

- Elite proxies and SOCKS5 proxies are key for a strong Scrapy proxy setup for big scraping jobs.

- A correct Scrapy middleware and settings.py setup reduces rate-limit errors and IP bans.

- Balancing data safety and speed keeps scraping sustainable over time.

- We give detailed steps for developers using Scrapy spiders, pipelines, and the python framework.

- Legal and monitoring practices are key for collecting data for a long time.

Understanding API Rate Limits

We first explore what API rate limits are and why they matter. These limits control how many requests we can make. This is crucial for web scraping and integrations, as it affects how fast and reliable our work can be.

What Are API Rate Limits?

API rate limits are rules set by providers to limit the number of requests. They can be based on IP, account, or API key. There are different types of limits, like burst or sustained, to manage short or long-term activity.

When we hit these limits, servers often respond with HTTP 429 (Too Many Requests). Many APIs also send headers, such as Retry-After, that report our current status and when we can try again.

For scraping teams, these limits mean we need to plan carefully. We must read headers, wait when needed, and keep track of our remaining requests to avoid getting blocked.
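As a minimal sketch of "read headers, wait when needed", the function below converts a Retry-After value into a wait time. It assumes the API sends the standard Retry-After header, which may hold either a number of seconds or an HTTP-date. The function name and the one-second default are our own choices.

```python
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime


def retry_after_seconds(header_value, now=None, default=1.0):
    """Convert a Retry-After header value into a non-negative wait in seconds."""
    if not header_value:
        return default
    value = header_value.strip()
    if value.isdigit():
        # Form 1: delay expressed directly in seconds.
        return float(value)
    try:
        # Form 2: an HTTP-date naming the moment we may retry.
        retry_at = parsedate_to_datetime(value)
    except (TypeError, ValueError):
        return default
    if retry_at.tzinfo is None:
        retry_at = retry_at.replace(tzinfo=timezone.utc)
    now = now or datetime.now(timezone.utc)
    return max(0.0, (retry_at - now).total_seconds())
```

A spider or middleware could call this on each 429 response and hold further requests to that host for the returned number of seconds.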

Why Do Rate Limits Exist?

Providers set limits to protect their systems and ensure everyone gets a fair share. These limits prevent abuse, reduce the risk of attacks, and help manage costs. They also help providers offer different levels of service for a fee.

For us, rate limits impact how we schedule our spiders and design our pipelines. We use Scrapy middleware and settings to handle 429 errors and manage our requests. A good proxy setup and tools like AutoThrottle help us stay within limits and maintain quality.

We need to use technical solutions and be mindful of our requests to work well within these limits. This way, our scraping and API integrations can be both effective and efficient.

The Need for Bypass Solutions

APIs with strict limits force teams to find workarounds to meet deadlines. We often face sudden spikes in traffic that hit limits fast. This pushes us to find ways to keep data flowing without breaking rules.

Scenarios like web scraping for prices and real-time feeds for trading are common. Market research and competitive monitoring also need high API usage. In these cases, using one spider alone hits limits fast. So, we spread requests across proxies, change credentials, or stagger schedules.

We configure our Scrapy proxy setup in settings.py so that requests are spread across multiple proxy endpoints. This keeps each spider within the limits that apply per IP. Proxy pools also reduce the need for many accounts, cut retries, and keep Scrapy queues moving.

Common Scenarios for Bypassing Limits

We collect price data for hundreds of products every day. A native API limits calls per minute. When we grow, we use proxies or multiple accounts to avoid hitting limits.

Streaming telemetry for logistics in real time is another challenge. Single-key limits slow us down. Using a proxy layer keeps our data flowing and prevents outdated information.

Our competitive monitoring sends many requests at once. Without spreading them out, we get 429s and our queues fill up. This wastes time and stalls our crawls.

Risks of Ignoring Rate Limits

Ignoring limits can lead to temporary bans and IP blacklisting. API key revocation can stop a project cold. Legal trouble is also a risk if we break terms of service.

Ignoring limits quickly shows up in logs, Scrapy queues, and corrupted data. This causes rework, lost time, and potential revenue loss.

To avoid these problems, we use respectful and compliant methods. We choose elite proxies, apply backoff, and monitor closely with Scrapy middleware. Proper setup in settings.py and careful spider behavior help us stay within limits.

| Scenario | Primary Risk | Typical Mitigation |
|---|---|---|
| E-commerce price aggregation | IP blocking and corrupted price snapshots | Use proxy pools, rotate user agents, tune spider concurrency |
| Real-time trading feeds | Delayed updates and missed opportunities | Distribute requests across proxies, implement backoff strategies |
| Market research at scale | API key throttling and quota exhaustion | Split workload across accounts, monitor quotas, log 429s |
| Competitive monitoring | Frequent 429s and queue congestion | Optimize scheduler, apply scrapy proxy setup in settings.py |

Introduction to Elite Proxies

We explore the basics of high-anonymity proxy services and their role in scraping and API strategies. This introduction prepares us for details on proxy types, protocols, and tool integration.

Next, we dive into how elite proxies stand out from others. We focus on SOCKS5 proxies for complex tasks. We’ll cover routing, DNS, session persistence, and provider features that ensure reliability.

What Are Elite Proxies?

Elite proxies, also known as high-anonymity proxies, hide the client IP. They don’t show that traffic goes through a proxy. This is different from transparent proxies, which forward the original IP, and anonymous proxies, which hide the IP but might show proxy use.

There are several types, including residential, datacenter, and mobile proxies. Residential proxies use ISP-assigned addresses. Datacenter proxies run on servers in data centers, which typically gives them low latency. Mobile proxies use carrier networks and are useful when a target treats mobile traffic differently from other traffic.

Many elite proxy providers offer SOCKS5 proxies as an option. SOCKS5 is known for its low-level socket tunneling, wide protocol support, and strong compatibility with custom tools.

How Do Elite Proxies Work?

Elite proxies send our traffic through intermediary IP addresses. This way, the destination sees the proxy IP, not the client IP. Hostnames can be resolved either on the client or by the proxy, depending on the protocol and on how we configure the connection.

Providers often use sticky IPs for session persistence. This keeps the same exit address for a sequence of requests. It’s good for maintaining sessions in login flows and stateful interactions.

Rotation policies vary. Some providers rotate IPs automatically or on demand. We can choose IPs from specific regions for localized content access.

SOCKS5 specifics are key for advanced setups. It supports TCP and UDP, handles authentication, and carries arbitrary protocols. This makes SOCKS5 proxies perfect for tunneling complex traffic or using non-HTTP protocols in scraping.

When using Scrapy, a proper setup routes requests through SOCKS5 or HTTP(S) proxies. This reduces fingerprinting risk and allows for more requests without exposing our IP.

Elite proxies enhance a strong python framework. They offer stable endpoints, authentication options, and APIs for pool management. This combination gives us control over concurrency, retries, and geographic targeting for scalable scraping and API access.

Benefits of Using Elite Proxies to Bypass Limits

Elite proxies bring many benefits when used with our scraping tools. They help avoid being blocked, protect our servers, and let us adjust performance for different tasks.

Elite proxies hide our IP addresses, making it safer to send many requests. They use rotating residential IPs and control how fast requests are sent. This makes it harder for websites to block us and keeps our servers safe.

We also get more security with elite proxies. They offer separate login details and fine-grained access controls. SOCKS5 adds protocol flexibility, but it does not encrypt traffic on its own; encryption still comes from TLS (HTTPS) between our client and the target.

Enhanced Anonymity & Security

Elite proxies make it harder to track our requests. They use rotating IPs and other techniques to hide our activity. We can set how often IPs change to balance safety and efficiency.

We keep login details separate for each project, and providers let us control access per project. This way, a single compromised credential does not expose everything.

Improved Access Speed

Elite proxies are typically faster and more reliable than free or low-cost options. Datacenter proxies offer the lowest latency, while residential and mobile proxies tend to succeed more often against APIs that block datacenter ranges, at the cost of some speed.

We adjust our proxy settings to work best with our tools. By tweaking how many requests we send at once and how fast, we get faster results. This means our spider can do its job quicker and with fewer errors.

| Aspect | Datacenter Proxies | Residential/Mobile Proxies | Best Use in Our Pipeline |
|---|---|---|---|
| Latency | Low | Medium to high | Use for high-volume, tolerant endpoints |
| Success Rate | Moderate | High | Choose for guarded APIs or sites |
| Cost | Lower | Higher | Balance budget and success needs |
| Session Options | Sticky sessions available | Sticky and rotation pools | Match session type to target behavior |
| Protocol Support | HTTP(S), limited SOCKS5 | Full HTTP(S) and SOCKS5 proxies | Use SOCKS5 proxies when app-level TLS or protocol flexibility matters |

Choosing the Right Proxy Provider

Choosing a proxy provider is key to a project’s success and cost. We focus on technical fit, legal posture, and operational metrics. This ensures our scraping work stays scalable and maintainable.

We look for features that reduce friction during integration. Below are the attributes we evaluate when vetting providers for production use.

- SOCKS5 support for low-level socket control and wider protocol compatibility.

- Authentication methods: username/password and IP whitelist options to match security policies.

- Rotation policies and session stickiness to manage identity persistence per task.

- Geo-targeting and pool size to reach region-locked endpoints and scale requests.

- Bandwidth limits, latency statistics, and SLA/uptime guarantees to plan capacity.

- API for management so we can provision, rotate, and monitor proxies programmatically.

- Logging and usage analytics for troubleshooting, quota tracking, and audit trails.

- Compatibility with PySocks or requests, easing our scrapy proxy setup and integration with scrapy middleware.

When comparing providers, cost and trust are most important. Below is a compact comparison matrix to guide decisions at a glance.

| Criteria | Residential | Datacenter |
|---|---|---|
| Typical cost model | Cost per GB, higher per IP | Lower cost per GB, lower per IP |
| Best use case | Anti-bot sites, geo-restricted content | High-volume API calls, fast throughput |
| Latency | Higher, variable | Lower, consistent |
| Detection risk | Lower, looks like real users | Higher, easier to block |
| Recommended tests | Small spider, rotate sessions, check response headers | Throughput test, latency and error-rate measurement |
| Integration notes | Verify IP pools and geo controls in settings.py and scrapy middleware | Validate proxy format and auth in scrapy proxy setup |

We advise practical checks before committing. Run a sample spider that logs success rates and latency. Tune settings.py values and enable scrapy middleware metrics to capture per-request behavior.

When assessing reputation, read discussions on Stack Overflow and GitHub issues to surface real-world problems. Test trial plans or money-back policies to confirm performance under load.

Cost per GB or per IP should not be the only decision factor. We weigh support responsiveness, legal jurisdiction, and compliance with terms of service to reduce operational risk.

By following these steps, we confirm that our Scrapy proxy setup meets our technical needs and that changes to middleware and settings.py deliver measurable improvements in reliability and throughput.

Implementing Elite Proxies in Your Strategy

Adding elite proxies to our workflow helps avoid hitting API rate limits. It keeps our requests efficient and discreet. Here are the steps to set up proxies in Scrapy and how to use them in settings.py and middleware.

Setting up elite proxies

First, sign up with a trusted provider like Bright Data, Oxylabs, or Smartproxy. Get the details you need, like endpoint information and credentials.

Next, decide between SOCKS5 and HTTP(S) proxies. SOCKS5 is better for non-HTTP traffic and tunneling.

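Install PySocks with pip (PyPI package names are case-insensitive, so pysocks installs the same package). PySocks adds SOCKS5 support to libraries such as requests, which is useful for testing a proxy outside Scrapy. Scrapy's built-in HttpProxyMiddleware targets HTTP(S) proxies and, to our knowledge, does not speak SOCKS5 natively, so SOCKS5 endpoints usually need a third-party download handler or a local HTTP-to-SOCKS bridge.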

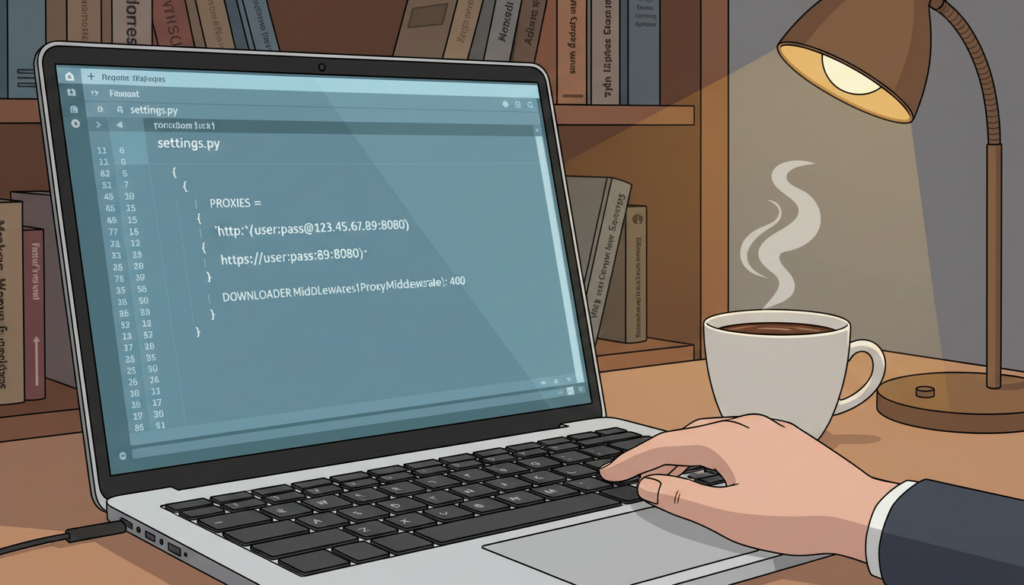

Put proxy defaults in settings.py. Use DOWNLOADER_MIDDLEWARES to enable a proxy middleware. Or create a custom downloader middleware to assign proxies per request.
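A custom downloader middleware that assigns a proxy per request could look like the sketch below. PROXY_POOL is a hypothetical custom setting, not a built-in Scrapy key, and the round-robin policy is only one possible choice. The class follows the process_request(request, spider) signature documented for downloader middleware, so check it against your Scrapy version.

```python
import itertools


class RoundRobinProxyMiddleware:
    """Downloader middleware that assigns one proxy per request, in rotation."""

    def __init__(self, proxy_urls):
        if not proxy_urls:
            raise ValueError("PROXY_POOL must contain at least one proxy URL")
        self._proxies = itertools.cycle(proxy_urls)

    @classmethod
    def from_crawler(cls, crawler):
        # PROXY_POOL is a hypothetical setting name defined by us in settings.py.
        return cls(crawler.settings.getlist("PROXY_POOL"))

    def process_request(self, request, spider):
        # Respect a proxy that the spider already chose for this request.
        if "proxy" not in request.meta:
            request.meta["proxy"] = next(self._proxies)
        return None  # let Scrapy continue with the remaining middlewares
```

To enable it, we would list the class in DOWNLOADER_MIDDLEWARES with a priority below 750, so that it runs before the built-in HttpProxyMiddleware, and define PROXY_POOL as a list of proxy URLs. The module path (for example myproject.middlewares) depends on the project layout.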

For proxies that need authentication, use proxy URLs that embed the credentials. Alternatively, set a Proxy-Authorization header from the middleware.
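Credentials that contain characters such as ":" or "@" can break a proxy URL unless they are percent-encoded. The helper below is a small sketch of that step; the function name, host, and credentials are illustrative assumptions.

```python
from urllib.parse import quote


def build_proxy_url(host, port, username=None, password=None, scheme="http"):
    """Return a proxy URL, percent-encoding credentials so ':' and '@' stay safe."""
    if username is None:
        return f"{scheme}://{host}:{port}"
    user = quote(username, safe="")
    pwd = quote(password or "", safe="")
    return f"{scheme}://{user}:{pwd}@{host}:{port}"
```

The resulting string can be assigned to request.meta["proxy"]. Scrapy's built-in HttpProxyMiddleware is expected to pick the credentials out of the URL and send them as a Proxy-Authorization header.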

Support sticky sessions with meta keys. Keep a session identifier in request.meta so that related requests reuse the same proxy endpoint.
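One way to sketch sticky sessions is a small mapping from session identifier to proxy. The proxy_session meta key is a hypothetical name chosen for this example, while proxy and cookiejar are meta keys that Scrapy itself recognizes.

```python
import itertools


class StickySessionProxies:
    """Map a session identifier to one proxy so related requests share an exit IP."""

    def __init__(self, proxy_urls):
        self._proxy_urls = list(proxy_urls)
        self._assigned = {}
        self._next_index = itertools.count()

    def proxy_for(self, session_id):
        # New sessions take the next proxy in order; known sessions keep theirs.
        if session_id not in self._assigned:
            index = next(self._next_index) % len(self._proxy_urls)
            self._assigned[session_id] = self._proxy_urls[index]
        return self._assigned[session_id]

    def release(self, session_id):
        self._assigned.pop(session_id, None)


def apply_sticky_proxy(request, sessions):
    # "proxy_session" is a hypothetical meta key set by the spider.
    session_id = request.meta.get("proxy_session")
    if session_id is not None:
        request.meta["proxy"] = sessions.proxy_for(session_id)
        # A matching cookiejar value keeps this session's cookies separate.
        request.meta.setdefault("cookiejar", session_id)
    return request
```

Calling apply_sticky_proxy from a downloader middleware's process_request keeps login flows and cart-style interactions on one exit address, while requests without a session identifier remain free to rotate.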

Configuring API requests

Set request headers and rotate User-Agent values. Use a header middleware or spider code to avoid uniform fingerprints.
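A header middleware for User-Agent rotation can be sketched as follows. USER_AGENT_POOL is a hypothetical custom setting holding the strings to rotate through, and the optional rng argument exists only to make the behavior reproducible in tests.

```python
import random


class RandomUserAgentMiddleware:
    """Downloader middleware that picks a User-Agent at random for each request."""

    def __init__(self, user_agents, rng=None):
        self._user_agents = list(user_agents)
        if not self._user_agents:
            raise ValueError("USER_AGENT_POOL must not be empty")
        self._rng = rng or random.Random()

    @classmethod
    def from_crawler(cls, crawler):
        # USER_AGENT_POOL is a hypothetical setting name, not a Scrapy built-in.
        return cls(crawler.settings.getlist("USER_AGENT_POOL"))

    def process_request(self, request, spider):
        request.headers["User-Agent"] = self._rng.choice(self._user_agents)
        return None
```

For sticky sessions, it is usually better to pick the User-Agent once per session rather than per request, so that one session does not appear to switch browsers midway.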

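Manage cookies per spider when needed. Scrapy's cookies middleware keeps separate sessions through the cookiejar key in request.meta, and the dont_merge_cookies meta key lets an individual request skip stored cookies.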

Tune retry and backoff logic for proxies. Enable retry handling and implement exponential backoff in custom middleware for polite delays.
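The delay calculation behind exponential backoff can be sketched in a few lines. This version uses the "full jitter" variant, which is our choice for the example: each retry waits a random time between zero and an exponentially growing ceiling. The base and cap values are assumptions to tune per target.

```python
import random


def backoff_delay(attempt, base=1.0, cap=60.0, rng=random):
    """Full-jitter backoff: a random wait in [0, min(cap, base * 2**attempt)]."""
    ceiling = min(cap, base * (2 ** attempt))
    return rng.uniform(0.0, ceiling)
```

Inside Scrapy, a blocking time.sleep in middleware stalls the whole Twisted reactor, so the computed delay is better applied by rescheduling the request or by adjusting the download slot delay than by sleeping.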

Use AutoThrottle in settings.py. Combine AUTOTHROTTLE_ENABLED with sensible CONCURRENT_REQUESTS and DOWNLOAD_DELAY values to avoid 429 responses.
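A settings.py fragment combining these keys might look like the following. All setting names are standard Scrapy settings, but the values are illustrative starting points, not recommendations for any particular target.

```python
# settings.py (illustrative starting values; tune them per target)
AUTOTHROTTLE_ENABLED = True
AUTOTHROTTLE_START_DELAY = 1.0         # initial download delay in seconds
AUTOTHROTTLE_MAX_DELAY = 30.0          # upper bound when responses slow down
AUTOTHROTTLE_TARGET_CONCURRENCY = 2.0  # average parallel requests per remote server

CONCURRENT_REQUESTS = 16
CONCURRENT_REQUESTS_PER_DOMAIN = 4
DOWNLOAD_DELAY = 0.5                   # AutoThrottle will not go below this delay

RETRY_ENABLED = True
RETRY_TIMES = 3
RETRY_HTTP_CODES = [429, 500, 502, 503, 504]
```

We would start conservatively, watch the share of 429 responses, and raise concurrency only while that share stays low.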

Map proxy rotation to API key usage. Distribute requests across a proxy pool and rotate API keys with endpoints to avoid concentrated rate usage.

Control pacing programmatically in spider code or middleware. Check response headers and adapt sleep intervals. Respect robots.txt and design pacing rules that follow target site policies.
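Adapting the interval to response headers can be sketched as spreading the remaining quota evenly over the time left in the window. The X-RateLimit-Remaining and X-RateLimit-Reset header names are a common convention rather than a standard, and we assume here that the reset value is a Unix timestamp; both must be checked against the API's documentation.

```python
def pacing_delay(remaining, reset_in_seconds, min_delay=0.0):
    """Spread the remaining request budget evenly over the rest of the window."""
    if remaining <= 0:
        # Budget exhausted: wait until the window resets.
        return max(min_delay, reset_in_seconds)
    return max(min_delay, reset_in_seconds / remaining)


def delay_from_headers(headers, now, min_delay=0.0):
    # Header names and the timestamp format are assumptions; adjust per API.
    try:
        remaining = int(headers["X-RateLimit-Remaining"])
        reset_at = float(headers["X-RateLimit-Reset"])
    except (KeyError, ValueError):
        return min_delay
    return pacing_delay(remaining, max(0.0, reset_at - now), min_delay)
```

For example, with 10 requests left and 60 seconds until reset, the sketch suggests waiting about 6 seconds between requests.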

Below is a compact comparison to help us choose configuration defaults for a balanced setup.

| Configuration Area | Recommended Default | Why It Helps |
|---|---|---|
| Proxy Type | SOCKS5 for mixed traffic, HTTP(S) for web-only | Matches protocol needs and reduces layering issues |
| settings.py Keys | DOWNLOADER_MIDDLEWARES, AUTOTHROTTLE_ENABLED, CONCURRENT_REQUESTS, DOWNLOAD_DELAY | Centralizes crawler behavior and throttle controls |
| Proxy Injection | meta['proxy'] per-request or custom scrapy middleware | Gives granular control per request or per spider |
| Session Persistence | meta keys for sticky sessions | Maintains session affinity for APIs with state |
| Rate Management | AutoThrottle + custom retry/backoff | Balances speed and reduces 429 errors |
| Rotation Strategy | Proxy pools mapped to API keys | Distributes load and protects single credentials |

Legal Considerations When Bypassing Limits

When we use proxies and scraping tools to bypass API rate limits, we face legal risks. Laws about unauthorized access and data collection vary by country. We must follow laws, privacy rules, and platform terms to avoid legal trouble while keeping our projects going.

We will now outline jurisdictional differences and practical steps for compliant usage. Remember, careful design of your scrapy proxy setup, pipeline, and python framework integration can lower risk and improve accountability.

Understanding Different Legal Jurisdictions

In the United States, the Computer Fraud and Abuse Act (CFAA) is central to disputes over unauthorized access. Courts have not always agreed on how broadly the CFAA applies, although recent cases have made the law clearer. We must avoid actions that could be seen as bypassing access controls or violating service contracts.

In the European Union, GDPR governs the collection, storage, and processing of personal data. We must collect minimal personal data, document legal bases for processing, and support data subject rights. Without consent or another lawful basis, collecting personal data can lead to fines and enforcement actions.

Other regions, like Canada, Australia, and many Asian countries, have their own rules on computer misuse and privacy. Enforcement varies by jurisdiction. We should assess legal risks for each target market before scaling scrapers or proxy pools.

Across jurisdictions, evading rate limits can be seen as violating terms-of-service. These violations often lead to civil remedies, account suspension, or cease-and-desist letters from service providers. We should treat terms-of-service compliance as a basic legal requirement.

Best Practices for Compliant Usage

We recommend that teams read and respect API terms of service before implementing any scrapy proxy setup. When an API offers commercial access, prefer paid endpoints. If we need more data, we should request permission rather than silently escalating requests.

Limit personal data collection and apply privacy-by-design principles inside the pipeline. Use data minimization, pseudonymization, and retention policies that match GDPR or other regional rules. Keep audit logs to show lawful intent and handling.

Implement respectful crawling rules. Add rate-limiting inside the python framework and honor robots.txt where applicable. Rotate proxies in an ethical manner and avoid aggressive parallelism that harms target infrastructure.

Configure spiders and pipeline components to skip protected content and to process takedown requests promptly. Maintain clear documentation and seek legal review for high-risk projects. When in doubt, use provider-friendly endpoints or licensed data feeds instead of evasive measures.

Monitoring Performance

Monitoring performance is key to seeing if elite proxies boost API speed without extra costs. We start with a baseline test before making any changes. This baseline lets us see how request success rates, latency, and errors change with the proxy fleet.

We focus on a few important metrics to keep our reports clear and useful. These include request success rate, 429 and 403 response counts, and requests per minute. We also track data quality, latency, and the cost per successful item.

How to Measure Success After Implementation

First, we set clear KPI targets. A high request success rate and fewer 429/403 responses show our proxy setup is working. We check latency and throughput to see if we’ve improved. And we keep an eye on the cost per item to stay within budget.

We also track error rates and retry counts. Having baseline numbers before we start helps us see how we’re doing. We log retries and compare them to before to catch any problems.
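The comparison against a baseline comes down to a few ratios. The sketch below computes them from per-status response counts; the function name, the dictionary keys, and the sample numbers in the usage note are hypothetical.

```python
def crawl_kpis(status_counts, items_scraped, retry_count, proxy_cost):
    """Summarize a crawl run from response counts keyed by HTTP status code."""
    total = sum(status_counts.values())
    ok = sum(n for status, n in status_counts.items() if 200 <= status < 300)

    def share(count):
        return count / total if total else 0.0

    return {
        "success_rate": share(ok),
        "rate_limited_share": share(status_counts.get(429, 0)),
        "forbidden_share": share(status_counts.get(403, 0)),
        "retries_per_request": share(retry_count),
        # None signals "no items", which avoids a misleading zero cost.
        "cost_per_item": proxy_cost / items_scraped if items_scraped else None,
    }
```

Running it once on baseline numbers and once after the proxy rollout, for example crawl_kpis({200: 800, 429: 150, 403: 50}, 700, 200, 14.0), gives two dictionaries that can be compared key by key.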

Tools for Tracking API Usage

We use a variety of tools for a complete view. Scrapy's logging and stats collection give us crawl-level metrics. We can also add custom counters through the stats collector (crawler.stats), typically from a middleware or extension.
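Scrapy already records response counts per status code in its stats. A custom counter is most useful for dimensions Scrapy does not track, such as blocks per proxy. The sketch below uses the stats collector's inc_value method; the proxy_monitor/ key prefix is a hypothetical naming choice.

```python
from urllib.parse import urlsplit


def proxy_label(proxy_url):
    """Return host:port without credentials, so secrets never reach the stats."""
    if not proxy_url:
        return "direct"
    parts = urlsplit(proxy_url)
    return f"{parts.hostname}:{parts.port}" if parts.port else str(parts.hostname)


class BlockedByProxyStatsMiddleware:
    """Downloader middleware that counts 403 and 429 responses per proxy."""

    def __init__(self, stats):
        self.stats = stats

    @classmethod
    def from_crawler(cls, crawler):
        return cls(crawler.stats)

    def process_response(self, request, response, spider):
        if response.status in (403, 429):
            label = proxy_label(request.meta.get("proxy"))
            # Key layout is our own convention: proxy_monitor/<status>/<proxy>.
            self.stats.inc_value(f"proxy_monitor/{response.status}/{label}")
        return response
```

The counters then appear in the stats dump at the end of the crawl, next to Scrapy's built-in values.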

For live dashboards and alerts, we use Prometheus with Grafana. These tools collect metrics from Scrapy or a small exporter. Sentry helps us catch exceptions that might slip past regular logs.

Cloud monitoring services like Datadog and New Relic give us a full view of our setup. Many proxy providers offer dashboards and APIs for tracking usage and failures.

We set up alerts for spikes in 429 responses, sudden latency jumps, or proxy failures. These alerts help us tweak our proxy selection, settings.py, or middleware to cut down on retries. Regular checks keep our monitoring up to date with changing traffic and API behavior.
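An alert on 429 spikes can be prototyped with a sliding window before it is moved into a monitoring stack. The window size and the 20% threshold below are assumptions for illustration.

```python
from collections import deque


class RateLimitSpikeDetector:
    """Flag a spike when the share of 429s in the last N responses is too high."""

    def __init__(self, window=100, threshold=0.2):
        self._recent = deque(maxlen=window)
        self._threshold = threshold

    def record(self, status):
        """Record one response status and return True if we are in a spike."""
        self._recent.append(status == 429)
        return self.is_spiking()

    def is_spiking(self):
        if len(self._recent) < self._recent.maxlen:
            return False  # wait for a full window before alerting
        return sum(self._recent) / len(self._recent) > self._threshold
```

A middleware could call record() for each response and, on a True result, log a warning or lower concurrency.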

Case Studies: Successful Bypass Examples

We share two real-world examples of bypassing API rate limits. These examples show how to keep data quality high. Each case study explains the architecture, proxy setup, spider behavior, and pipeline handling.

E-commerce data collection

A mid-size retailer needed to monitor prices and inventory across the US, Canada, and the UK. They faced challenges with session persistence and avoiding 429 responses.

We used SOCKS5 residential proxies with sticky sessions for cart-sensitive endpoints. We also integrated proxy rotation into Scrapy middleware. This way, each spider request picked a proxy from a managed pool.

In settings.py, we adjusted CONCURRENT_REQUESTS, DOWNLOAD_DELAY, and AutoThrottle. This matched the target tolerance levels. We also set per-proxy rate limits and randomized header rotation to reduce request correlation.

The pipeline ingested cleaned records into a Postgres database. It had schema-normalized product and price tables. As a result, hourly scraping throughput increased by 45%, ban rates dropped below 2%, and the pipeline delivered fewer duplicates and higher-quality records for analytics.

Market research applications

A market research firm needed to harvest public social-media posts and articles. They wanted broad coverage with low request correlation to avoid throttles.

We combined datacenter and residential elite proxies for speed and success rate. Each client account got a dedicated subset of proxies to limit cross-client correlation. Scrapy middleware handled retries, exponential backoff, and header rotation, while settings.py orchestrated DOWNLOAD_TIMEOUT and RETRY_TIMES for resilience.

Monitoring used Grafana dashboards for API usage, proxy health, and spider throughput. The mixed-proxy approach increased data coverage by 30% and improved freshness for trend signals. The downstream pipeline normalized and enriched records before storage, giving analysts consistent inputs for modeling.

| Metric | E-commerce Project | Market Research Project |
|---|---|---|
| Proxy types | SOCKS5 residential (sticky sessions) | Residential elite + datacenter mix |
| Scrapy configuration | Custom middleware for proxy rotation; tuned CONCURRENT_REQUESTS and AutoThrottle | Middleware for retries/backoff; header rotation and per-client allocation in settings.py |
| Spider strategy | Session-aware spiders that preserve cookies and cart tokens | Parallel spiders with per-proxy client pools to lower correlation |
| Pipeline outcome | Cleaner ingestion into Postgres; fewer duplicates; 45% throughput gain | Normalized, enriched records for analytics; 30% better coverage |
| Monitoring | Proxy pool health checks and ban-rate alerts | Grafana dashboards for API usage, proxy success rate, and freshness |

Troubleshooting Common Issues

We start by laying out steps to diagnose common failures when integrating proxies and APIs. The tips below help us isolate network faults, authentication errors, and rate-limit responses. We keep our scrapy proxy setup and middleware patterns in mind.

Identifying Connectivity Problems

We watch for clear symptoms: timeouts, connection refused errors, DNS failures, and frequent proxy authentication errors. Each symptom points to a different root cause and a different diagnostic path.

We replicate requests outside Scrapy using curl or the requests library through the same SOCKS5 or HTTP endpoint. This verifies whether the issue sits with the proxy, the network, or Scrapy itself.

We check provider status pages and inspect Scrapy logs for stack traces. Enabling detailed logging in downloader middleware reveals handshake failures and retry behavior.

We verify DNS routing by comparing results when resolving hostnames locally versus through the proxy. Testing with and without the proxy isolates whether DNS or routing is handled client-side or proxy-side.
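For the out-of-Scrapy check, the requests library accepts SOCKS proxy URLs once its SOCKS extra is installed. With that support, the socks5h scheme asks the proxy to resolve hostnames, while socks5 resolves them on the client, which makes the DNS comparison straightforward. The helper names below are our own.

```python
from urllib.parse import quote


def requests_proxies(host, port, username=None, password=None, remote_dns=True):
    """Build a `proxies` mapping for the requests library.

    remote_dns=True selects socks5h (proxy-side DNS); False selects socks5.
    """
    scheme = "socks5h" if remote_dns else "socks5"
    auth = ""
    if username:
        auth = f"{quote(username, safe='')}:{quote(password or '', safe='')}@"
    url = f"{scheme}://{auth}{host}:{port}"
    return {"http": url, "https": url}


def fetch_via_proxy(url, proxies, timeout=10):
    # Imported lazily: needs `pip install "requests[socks]"`, which pulls in PySocks.
    import requests

    response = requests.get(url, proxies=proxies, timeout=timeout)
    return response.status_code, response.elapsed.total_seconds()
```

Calling fetch_via_proxy once with remote_dns=True and once with remote_dns=False against the same URL shows whether a failure follows the DNS path or the proxy itself.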

Resolving API Call Failures

We recognize patterns in API responses and map remedies to each pattern. For 429 rate-limit responses, we implement exponential backoff, lower concurrency in settings.py, and expand our proxy pool to spread requests.

For 403 bans, we rotate to higher-quality residential proxies, refine headers and fingerprinting, and ensure our request patterns match legitimate clients more closely.

Authentication failures require credential validation and a review of provider documentation. We confirm keys, tokens, and signature methods before adjusting retry logic in scrapy middleware.

When latency spikes, we choose geographically closer proxy nodes or upgrade provider tiers to reduce round-trip time. We monitor performance after each change to confirm gains.

We automate failover inside scrapy middleware by maintaining fallback proxy lists, blacklisting IPs that fail repeatedly, and increasing retry attempts with escalating delays. This approach reduces manual intervention and improves uptime.
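The failover logic can be kept separate from Scrapy in a small pool class that a middleware then consults. This is a sketch under simple assumptions: a proxy is blacklisted after max_failures consecutive failures, and the fallback list is used only when no primary proxy is healthy.

```python
class FailoverProxyPool:
    """Rotate over healthy proxies, falling back when the primary list is exhausted."""

    def __init__(self, primary, fallback=(), max_failures=3):
        self._primary = list(primary)
        self._fallback = list(fallback)
        self._max_failures = max_failures
        self._failures = {}
        self._cursor = 0

    def _healthy(self, proxies):
        return [p for p in proxies if self._failures.get(p, 0) < self._max_failures]

    def pick(self):
        candidates = self._healthy(self._primary) or self._healthy(self._fallback)
        if not candidates:
            raise RuntimeError("no healthy proxies left")
        proxy = candidates[self._cursor % len(candidates)]
        self._cursor += 1
        return proxy

    def report_failure(self, proxy):
        self._failures[proxy] = self._failures.get(proxy, 0) + 1

    def report_success(self, proxy):
        # A success clears the failure count, so only repeated failures blacklist.
        self._failures.pop(proxy, None)
```

A middleware would call pick() in process_request, report_failure() from process_exception or on 403/429 responses, and report_success() on normal responses.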

Future of API Rate Limits and Proxies

We’re looking at how new tech will change how we access web services. Advances in machine learning, gateway tech, and developer tools will shape API rate limits and scraping. We focus on how these changes impact engineering, compliance, and proxy management.

Trends in API management

API gateways such as Kong and Google Apigee continue to evolve. They are likely to adopt dynamic throttling based on risk, device, and behavior. Providers may also move toward pay-per-use pricing for valuable APIs.

Machine learning will help detect bots in real time. Teams will need to create clients that follow changing API limits without false positives. Token-based systems and short-lived credentials will help control access better.

How proxies will evolve

Proxy platforms will offer more detailed analytics and anti-detection tools. We’ll see smarter tools for managing proxy pools, rotating fingerprints, and showing health metrics. New transports like SOCKS5-over-TLS and better session handling will reduce connection issues.

Open-source tools like Scrapy will get updates for proxy pooling and compliance checks. A solid proxy setup will become key for teams gathering public data while avoiding detection.

We expect proxies and testing pipelines to work closer together. This will let us test against gateway rules before going live. Better monitoring will help us manage API limits without guessing.

Conclusion: Maximizing Your Bypass Efficiency

We end with a clear guide on how to bypass API rate limits effectively. It’s crucial to know why rate limits are in place and when it’s okay to bypass them. Elite proxies, especially SOCKS5, offer great anonymity, speed, and reliability when set up right.

Setting up Scrapy with proxies involves several steps: configuring proxies in settings.py, adding middleware, and designing spiders that respect the target's limits. When these pieces work together, the strategy behaves consistently from run to run.

Key takeaways include using features like rotation and geo-targeting from providers. Adjust AutoThrottle and concurrency settings in settings.py. Also, make sure our middleware is strong and our pipelines don’t repeat work.

Keep an eye on important metrics and log API responses. Set up alerts to catch any issues early. Always follow the law and ethics in our testing and production.

Start by testing an elite proxy setup in a safe space. Run real spider workloads and check how well it works. Look at throughput, error rates, and cost per successful request. Based on these, adjust your proxy and Scrapy setup for better results.

By controlling settings.py, having strong middleware, and careful pipeline management, we can bypass limits well. This approach boosts data quality and makes our operations more efficient.

FAQ

What are API rate limits and how do they affect Scrapy spiders?

API rate limits are caps that providers place on the number of requests in a given period. They can be per-IP, per-account, or per-API-key. When a limit is exceeded, servers often return HTTP 429 along with headers that indicate when to retry.

For Scrapy spiders, rate limits cause more 429 responses and slower speeds. We need to detect these limits and use AutoThrottle. Adjusting settings like CONCURRENT_REQUESTS and DOWNLOAD_DELAY helps avoid throttling.

Why use elite proxies (including SOCKS5) to bypass rate limits?

Elite proxies hide our IP and don’t show proxy usage. SOCKS5 supports TCP/UDP and authentication. They help spread requests and keep sessions consistent.

By using elite proxies in Scrapy, we can increase our throughput. This keeps our activities anonymous and maintains session continuity.

What scenarios justify bypassing rate limits with proxies?

Large-scale price monitoring and near-real-time feeds are good reasons. Market research and competitive intelligence also need high-volume scraping.

When limits are too low, proxies and multiple accounts help. Always use proxies ethically and follow provider guidelines.

What legal risks should we consider when using proxies to bypass limits?

Legal risks vary by country. In the U.S., the CFAA and case law are important. In the EU, GDPR affects personal data.

Rate limit evasion can break terms of service. Always read API terms and keep logs. Legal advice is crucial for high-risk projects.

How do we integrate SOCKS5 or elite proxies into Scrapy?

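First, sign up with a provider and get credentials. Then install PySocks for testing proxies with libraries such as requests. Scrapy's built-in proxy middleware targets HTTP(S) proxies, so SOCKS5 endpoints may need a third-party download handler or a local HTTP-to-SOCKS bridge.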

Configure Scrapy to use proxies. Set per-request proxies or use middleware for proxy rotation. Adjust settings for concurrency and retries.

Which proxy features matter most when choosing a provider?

Look for SOCKS5 support, auth methods, and rotation policies. Geo-targeting, pool size, and session stickiness are also key.

Check bandwidth limits, latency, and uptime. Management APIs and logging are important. Test providers with a sample spider.

How should we configure Scrapy settings.py when using proxies to avoid 429s?

Enable AutoThrottle so delays adapt to target behavior, and set reasonable values for CONCURRENT_REQUESTS and DOWNLOAD_DELAY. Enable retry logic for 429 responses.

Distribute requests across proxy pools. Monitor 429 rates and adjust as needed.

What monitoring metrics indicate our proxy strategy is working?

Track success rate, 429/403 counts, and throughput. Also, monitor latency, retries, and cost per item.

Use Scrapy stats and other tools. Set alerts for spikes in 429s or latency.

How do we troubleshoot frequent proxy-related connection failures?

Reproduce requests outside Scrapy and check provider status. Examine Scrapy logs and middleware traces.

Validate credentials and proxy formats. Test with/without proxy to find issues. Implement blacklisting and failover.

When should we choose residential vs datacenter proxies?

Residential proxies are better for sites with strong defenses. They offer higher success rates but are more expensive and slower.

Datacenter proxies are faster and cheaper but may not work for all APIs. Many teams use both types.

How do we handle session persistence and sticky IPs for APIs that require it?

Get sticky IPs or session-preserving endpoints from providers. Pass consistent proxy credentials or session identifiers.

In Scrapy, store session mapping in spider state or use request.meta. Balance stickiness with rotation to avoid per-IP limits.

What are best practices for header and fingerprint rotation alongside proxy use?

Rotate User-Agent strings and other non-essential headers. Maintain consistent header sets within a session.

Manage cookies carefully: preserve them when sessions are sticky and clear them when rotating proxies. Use realistic header combinations and avoid revealing automation artifacts.

How do proxy costs and KPIs affect provider selection over time?

Track cost per successful request/item and compare against KPIs like success rate and latency. Select providers offering acceptable trade-offs.

Re-evaluate providers periodically using real spider runs. Use provider trials and staged rollouts to avoid surprises.

What future developments should we prepare for in API rate limits and proxies?

Expect more granular, ML-driven rate controls and dynamic bot-detection. Proxies will evolve with better orchestration and enhanced anti-detection features.

Scrapy and Python frameworks will add middleware for proxy pools and fingerprint rotation. Design modular proxy middleware and automated monitoring to adapt quickly.